Pattern 1

Thread-pool state leakage

Symptom. A test that mutates a module-level Map or counter passes alone and fails when the file runs alongside another that imports the same module.

Root cause. In the threads pool (Vitest's default before 2.0; newer releases default to forks), every worker is a Node worker thread. When a worker is reused across files without per-file isolation, which is what isolate: false does (see Pattern 3), it loads each module once and holds onto its top-level state. Two test files that import the same module then share its singletons inside that thread. The first file mutates a cache or a counter; the second imports the module and inherits the dirty state. Running each file in its own forked process gives you process-level isolation but costs startup time per file.

// counter.ts
export const seen = new Set<string>();

export function record(id: string) {
  seen.add(id);
}

// a.test.ts
import { expect, test } from "vitest";
import { record, seen } from "./counter";

test("records once", () => {
  record("u-1");
  expect(seen.size).toBe(1); // passes
});

// b.test.ts (same worker thread, module cache shared)
import { expect, test } from "vitest";
import { seen } from "./counter";

test("starts empty", () => {
  expect(seen.size).toBe(0); // fails: received 1
});

Fix. Treat module-scope mutable state as a smell in tested modules. If you cannot remove it from production code, reset it in beforeEach, or route the affected file to a different pool with poolMatchGlobs. For full per-file process isolation, set pool: 'forks' in vitest.config.ts, leave isolate at its default of true, and accept the cold-start cost.

// vitest.config.ts
import { defineConfig } from "vitest/config";

export default defineConfig({
  test: {
    pool: "forks",
    poolOptions: { forks: { singleFork: false } },
  },
});
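
The beforeEach reset mentioned in the fix could look like the sketch below. It assumes you are free to add a small test-only helper to counter.ts; resetCounter is a hypothetical name, not something the original module exports.

```typescript
// counter.ts, extended with a hypothetical test-only reset helper.
export const seen = new Set<string>();

export function record(id: string): void {
  seen.add(id);
}

// Assumption: resetCounter is a name invented for this sketch.
// It returns the module to the state it had right after import.
export function resetCounter(): void {
  seen.clear();
}

// In a test file (or a shared setup file) the helper would be wired up as:
//
//   import { beforeEach } from "vitest";
//   import { resetCounter } from "./counter";
//
//   beforeEach(() => resetCounter());
```

The reset lives next to the state it clears, so a new field added to the module is harder to forget than it would be in a distant setup file.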

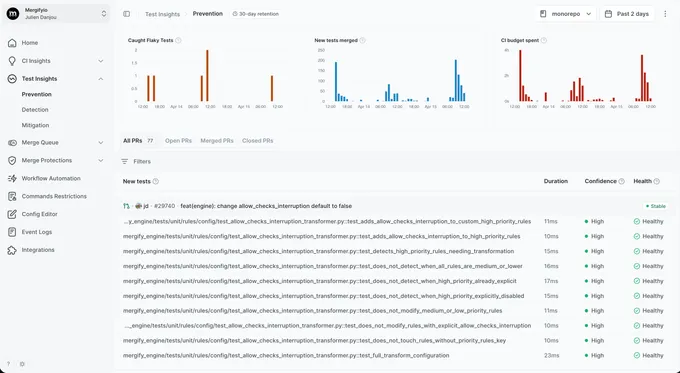

With Mergify. Test Insights catches the cross-file signature: file B only fails when file A ran on the same worker thread. The dashboard tags the dependency so you know thread reuse is the cause, not a test bug in B.

Pattern 2

vi.mock() hoisting and closure capture

Symptom. A test file imports a module and then calls `vi.mock()` on it, and the test either runs the real implementation or sees an undefined value where the mock should be.

Root cause. vi.mock() is hoisted above imports at transform time. The factory cannot safely reference variables declared at the top level of the file, because hoisting moves the call, not the declarations it closes over. Vitest rewrites the file before it executes, so the code runs in a different order than reading the file top to bottom suggests.

import { expect, test, vi } from "vitest";
import { fetchUser } from "./api";

const stubUser = { id: "u-1", name: "Rémy" };

// Hoisted to the top. stubUser is not initialized yet when the factory runs.
vi.mock("./api", () => ({
  fetchUser: vi.fn().mockResolvedValue(stubUser),
}));

test("returns the stub", async () => {
  const u = await fetchUser();
  // With const, the factory throws a ReferenceError before this line runs.
  expect(u.name).toBe("Rémy");
});

Fix. Use vi.hoisted() to declare values that the mock factory needs, so they are hoisted alongside the mock. Or move the value into the factory body so closure capture is not in play.

const { stubUser } = vi.hoisted(() => ({
  stubUser: { id: "u-1", name: "Rémy" },
}));

vi.mock("./api", () => ({
  fetchUser: vi.fn().mockResolvedValue(stubUser),
}));

With Mergify. Hoisting bugs show up in Test Insights as a consistent failure when the mocked file runs cold (first run on a fresh worker) and a pass when it runs warm. The dashboard groups failures by worker freshness so the pattern is visible.

Pattern 3

isolate:false module cache sharing

Symptom. A perf engineer sets `isolate: false` for a 30% speedup, and three tests start failing depending on the order they happen to run in.

Root cause. isolate: true is the default and it gives every test file a fresh module graph. Setting it to false reuses the module cache across files in the same worker, which is fast but means top-level side effects, module singletons, and any patched module survive into the next file. Tests that pass under isolation can fail without it because the prior file's mutation is still there.

// vitest.config.ts
import { defineConfig } from "vitest/config";

export default defineConfig({
  test: { isolate: false }, // 30% faster, breaks isolation
});

// flags.ts (production code with module-level cache)
// Flags and loadFlags() are defined elsewhere in the codebase.
let cached: Flags | null = null;

export function flags() {
  if (!cached) cached = loadFlags();
  return cached;
}

// a.test.ts mutates the cache via a helper, b.test.ts inherits the result

Fix. Keep isolate: true unless the suite is purely functional. If you really need the speed, audit every imported module for top-level mutable state and add explicit reset hooks. Do not flip the flag once and assume the suite still proves what it used to.
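
One way to keep the "explicit reset hooks" auditable is a small registry that every stateful module signs up to, plus a single call in a setup file that runs all of them. This is a sketch under assumptions: registerReset, resetAllModuleState, loadCount, and the Flags shape are hypothetical, and loadFlags is stubbed so the example runs on its own.

```typescript
// resetRegistry.ts (hypothetical): one place that knows how to undo module state.
type ResetHook = () => void;
const hooks: ResetHook[] = [];

export function registerReset(hook: ResetHook): void {
  hooks.push(hook);
}

export function resetAllModuleState(): void {
  for (const hook of hooks) hook();
}

// flags.ts, reshaped to register its own reset hook.
type Flags = { darkMode: boolean }; // assumed shape, for illustration only

let loads = 0;
function loadFlags(): Flags {
  loads += 1; // stand-in for the real loader
  return { darkMode: false };
}

let cached: Flags | null = null;
registerReset(() => {
  cached = null; // the next flags() call reloads from scratch
});

export function flags(): Flags {
  if (!cached) cached = loadFlags();
  return cached;
}

export function loadCount(): number {
  return loads;
}

// In a file listed under setupFiles, the registry would be drained per test:
//   import { beforeEach } from "vitest";
//   beforeEach(() => resetAllModuleState());
```

A module that holds top-level state but never calls registerReset then stands out in review, which makes the audit easier to repeat.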

With Mergify. Test Insights compares pass rates before and after a config change. When isolate flips and three tests start losing confidence, the dashboard surfaces them grouped by the commit that introduced the regression.

Pattern 4

Snapshot races inside test.concurrent

Symptom. Inline or file snapshots in a `test.concurrent` block disagree on rerun: sometimes one test owns the snapshot, sometimes another does, and the diff flips with every commit.

Root cause. test.concurrent runs tests in parallel inside a single file. Snapshots are written to a single .snap file keyed by test name; concurrent tests writing different fixtures into overlapping keys can produce a file that depends on which test's write landed last. Inline snapshots avoid the file-level race but their auto-update step still runs in parallel and can serialize the wrong test's output.

describe.concurrent("invoice rendering", () => {
  test("dollars", () => {
    expect(format(1099, "USD")).toMatchSnapshot();
  });

  test("euros", () => {
    expect(format(1099, "EUR")).toMatchSnapshot();
  });
  // both tests touch the same .snap file at the same time on update
});

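Fix. Keep snapshot tests sequential. Drop describe.concurrent on suites that snapshot, or split the snapshotting tests into a dedicated non-concurrent block. If a suite has to stay concurrent, Vitest's documentation says to take expect from the test context (the first argument of the test callback) so each snapshot is attributed to the right test.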

describe("invoice rendering", () => {
  test("dollars", () => {
    expect(format(1099, "USD")).toMatchSnapshot();
  });

  test("euros", () => {
    expect(format(1099, "EUR")).toMatchSnapshot();
  });
});

With Mergify. Test Insights detects the snapshot churn pattern: the same test file produces a different .snap diff on consecutive runs of the same SHA. The dashboard surfaces the file as snapshot-unstable so the concurrency root cause is obvious.

Pattern 5

Fake-timer leakage between tests

Symptom. A test that calls `vi.useFakeTimers()` passes alone, and the next test in the file fails with a setTimeout firing at the wrong moment.

Root cause. vi.useFakeTimers() swaps in a worker-scoped timer mock that survives the test unless explicitly restored. Vitest does not switch back to real timers on its own: restoreMocks: true restores spies and mocks, but fake-timer state is separate from mock state. The next test inherits whatever timers were queued and not flushed.

test("schedules a refresh", () => {
  vi.useFakeTimers();
  setInterval(refresh, 1000);
  // no cleanup; fake timers and the queued interval leak
});

test("renders the dashboard", () => {
  render(<Dashboard />);
  // refresh fires under fake timers from the previous test
});

Fix. Pair every vi.useFakeTimers() with vi.useRealTimers() in afterEach, and clear pending timers before the switch. If you use fake timers everywhere, set them once in a setup file and stop flipping per-test.

afterEach(() => {
  vi.clearAllTimers();
  vi.useRealTimers();
});

With Mergify. Test Insights reruns the suspect test on its own. When the same SHA passes alone but fails after a specific neighbor, the test gets flagged as ordering-sensitive and quarantined while you fix the timer cleanup.

Pattern 6

globals:true config drift across files

Symptom. A setup file uses `expect` or `vi` without importing them, and one test file in five hundred fails with `ReferenceError: vi is not defined`.

Root cause. globals: true injects expect, vi, describe, and friends into every test file the runner picks up. Setup files referenced via setupFiles get the injection too. A second project config (a Storybook subfolder, a Cypress component test config) that omits the flag breaks the moment it loads the shared setup file.

// vitest.config.ts
import { defineConfig } from "vitest/config";

export default defineConfig({ test: { globals: true } });

// shared/setup.ts (relies on globals)
afterEach(() => vi.clearAllMocks());

// vitest.storybook.config.ts (forgot the flag)
import { defineConfig } from "vitest/config";

export default defineConfig({
  test: { setupFiles: ["./shared/setup.ts"] },
}); // ReferenceError: afterEach is not defined (vi would fail next)

Fix. Either commit to globals everywhere by setting the flag in a shared base config that every project extends, or commit to explicit imports everywhere and remove the flag. Mixing the two modes across configs is the trap.

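// vitest.base.ts
export const baseConfig = { test: { globals: true } };

// vitest.config.ts
import { defineConfig, mergeConfig } from "vitest/config";
import { baseConfig } from "./vitest.base";

export default defineConfig(mergeConfig(baseConfig, { test: { include: [...] } }));

// vitest.storybook.config.ts
import { defineConfig, mergeConfig } from "vitest/config";
import { baseConfig } from "./vitest.base";

export default defineConfig(mergeConfig(baseConfig, { test: { include: [...] } }));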
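
The other option in the fix, explicit imports everywhere, comes down to the shared setup file importing what it uses. A minimal sketch, assuming the same shared/setup.ts as in the failing example:

```typescript
// shared/setup.ts (explicit imports; behaves the same with or without globals: true)
import { afterEach, vi } from "vitest";

afterEach(() => {
  vi.clearAllMocks();
});
```

With the imports in place, the file no longer depends on which config loaded it.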

With Mergify. Globals drift fails consistently for the affected config, not intermittently, so Test Insights flags the suite as broken rather than flaky. The dashboard groups the failures under the offending config so you know which one drifted.

Pattern 7

Watch-mode versus CI cache divergence

Symptom. Tests pass in `vitest --watch` on your laptop and fail on the first CI run after a fresh checkout. They pass on the rerun.

Root cause. Watch mode keeps the Vite dev server alive between runs and reuses its module graph and dependency cache. CI starts cold every time. A test that depends on a module being loaded in a particular order, or on a transformer cache being warm, behaves differently across the two. The same test file that always passes in watch can fail on the first cold run when an async transform race tips the wrong way.

// component.test.tsx
import { Component } from "./Component";
import { setup } from "./testHarness"; // top-level await + side effects

// In watch mode: setup ran once, its side effects are cached.
// On cold CI: setup runs concurrently with Component import; race is real.
test("renders", () => {
  render(<Component />); // sometimes hits a half-initialized harness in CI
});

Fix. Move side effects into beforeAll or a setupFiles hook so the test runner controls the order. Avoid top-level await in test-only modules. Run the suite cold locally with vitest run --no-cache to reproduce CI behavior.
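
To make "the test runner controls the order" concrete, the harness can expose its initialization as an explicit, memoized step instead of doing work at import time. The sketch below is an assumption about what testHarness might look like; Harness, initHarness, and initCallCount are hypothetical names.

```typescript
// testHarness.ts (hypothetical shape): nothing runs at import time.
export interface Harness {
  ready: boolean;
}

let initCalls = 0;
let pending: Promise<Harness> | null = null;

async function createHarness(): Promise<Harness> {
  // Stand-in for the real asynchronous setup work.
  await Promise.resolve();
  return { ready: true };
}

// Memoized: the first caller starts initialization and later callers share
// the same promise, so awaiting it never yields a half-initialized harness.
export function initHarness(): Promise<Harness> {
  if (!pending) {
    initCalls += 1;
    pending = createHarness();
  }
  return pending;
}

export function initCallCount(): number {
  return initCalls;
}

// In component.test.tsx the runner then decides when setup happens:
//   import { beforeAll } from "vitest";
//   beforeAll(async () => { await initHarness(); });
```

Because the promise is shared, calling initHarness from several files or hooks is safe, and a cold run behaves the same as a warm one.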

With Mergify. Test Insights tracks pass rates separately on the default branch (cold) and on PR runs (often warmer). When a test is reliable on PR runs and unreliable on main, the dashboard surfaces the cold-vs-warm signature.

Pattern 8

Vitest retry config hiding real bugs

Symptom. Your pipeline is green. A user reports a bug your tests should have caught.

Root cause. retry: 3 in the config re-runs every failing test up to three times and reports the last result. A real race that loses on attempt 1 and wins on attempt 2 gets reported as green. The bug is still there. The pipeline has decided not to look at it.

// vitest.config.ts (please don't)
import { defineConfig } from "vitest/config";

export default defineConfig({
  test: { retry: 3 },
});
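
To see what a framework-level retry discards, compare the final verdict with a per-attempt record. The sketch below is plain TypeScript, not Vitest's or Mergify's implementation; runWithAttempts, AttemptLog, and racyTest are hypothetical names.

```typescript
type Outcome = "pass" | "fail";

interface AttemptLog {
  attempts: Outcome[]; // one entry per execution, in order
  final: Outcome; // what a retrying runner would report
  flaky: boolean; // passed, but only after at least one failure
}

// Runs testFn once, then up to `retries` more times while it keeps throwing.
export function runWithAttempts(testFn: () => void, retries: number): AttemptLog {
  const attempts: Outcome[] = [];
  let final: Outcome = "fail";
  for (let i = 0; i <= retries; i++) {
    try {
      testFn();
      final = "pass";
    } catch {
      final = "fail";
    }
    attempts.push(final);
    if (final === "pass") break;
  }
  return { attempts, final, flaky: final === "pass" && attempts.length > 1 };
}

// Stand-in for a race that loses on the first run and wins on the second.
let calls = 0;
export function racyTest(): void {
  calls += 1;
  if (calls === 1) throw new Error("lost the race");
}
```

Calling runWithAttempts(racyTest, 3) reports a passing final result, while the attempts array still shows the initial failure that a bare retry count would hide.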

Fix. Do not retry at the framework level. When a test is genuinely flaky, fix it. When the fix takes longer than a session, quarantine it instead. That keeps the signal visible without blocking the merge queue.

With Mergify. Test Insights reruns at the CI level with attempt-level result tracking. You see that a test passed on attempt 2 of 3, which is exactly the information the retry config throws away. Quarantine kicks in once the pattern is clear.