Pattern 1

dependsOnGroups graph collapses on a single failure

Symptom. A run reports 1 failure and 47 skipped tests, all of which were waiting on the failing test through a chain of `dependsOnGroups`.

Root cause. `@Test(dependsOnGroups = "auth")` tells TestNG to skip a test (and everything that depends on it) when an upstream group fails. The intent is to avoid noise from cascading errors, but it also hides regressions: any flake in an upstream test silently disables a chunk of the suite. The pipeline looks "green except for one test" when in reality the suite ran 12% of its checks.

    public class AuthTest {
        @Test(groups = "auth")
        public void login() {
            assertTrue(authClient.login("user", "pw")); // racy under load
        }
    }

    public class CheckoutTest {
        @Test(groups = "checkout", dependsOnGroups = "auth")
        public void canCheckout() { ... }

        @Test(groups = "checkout", dependsOnGroups = "auth")
        public void canApplyDiscount() { ... }

        // every checkout test silently skips when login flakes
    }
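
To make the cascade concrete, here is a simplified model of hard group dependencies. It is not TestNG's actual scheduler: it only assumes that a failed test breaks its group, that every test depending on a broken group is skipped, and that a skipped test breaks its own group in turn. `SkipCascade` and the `sendsReceipt` test in the usage note are hypothetical names.

```java
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Simplified model of hard group dependencies; not TestNG's real scheduler. */
final class SkipCascade {

    /**
     * @param groupOf    test name -> the group it belongs to
     * @param dependsOn  test name -> the groups it depends on
     * @param failedTest the single test that actually failed
     * @return names of the tests that end up skipped
     */
    static Set<String> skippedAfter(Map<String, String> groupOf,
                                    Map<String, List<String>> dependsOn,
                                    String failedTest) {
        Set<String> brokenGroups = new HashSet<>();
        brokenGroups.add(groupOf.get(failedTest));
        Set<String> skipped = new HashSet<>();

        boolean changed = true;
        while (changed) { // repeat until no new test gets skipped
            changed = false;
            for (String test : groupOf.keySet()) {
                if (test.equals(failedTest) || skipped.contains(test)) {
                    continue;
                }
                List<String> upstream = dependsOn.getOrDefault(test, List.of());
                if (upstream.stream().anyMatch(brokenGroups::contains)) {
                    skipped.add(test);
                    brokenGroups.add(groupOf.get(test)); // a skip breaks its own group too
                    changed = true;
                }
            }
        }
        return skipped;
    }
}
```

If `login` (group `auth`) fails, `canCheckout` and `canApplyDiscount` depend on `auth`, and a hypothetical `sendsReceipt` depends on `checkout`, the model skips all three: the third test never mentions `auth` and is still lost.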

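Fix. Use `dependsOnGroups` only when downstream tests are genuinely meaningless without the upstream (a true precondition, not a convenience). For "tests that share setup", lift the setup into `@BeforeClass` so each class performs its own login and a failure is reported against the class it affects. A failing `@BeforeClass` still skips the methods of that class by default (`configfailurepolicy="skip"`), but the blast radius is one class instead of every dependent group.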

    public class CheckoutTest {
        @BeforeClass
        public void authenticate() {
            assertTrue(authClient.login("user", "pw"), "auth precondition");
        }

        @Test
        public void canCheckout() { ... }

        @Test
        public void canApplyDiscount() { ... }
    }

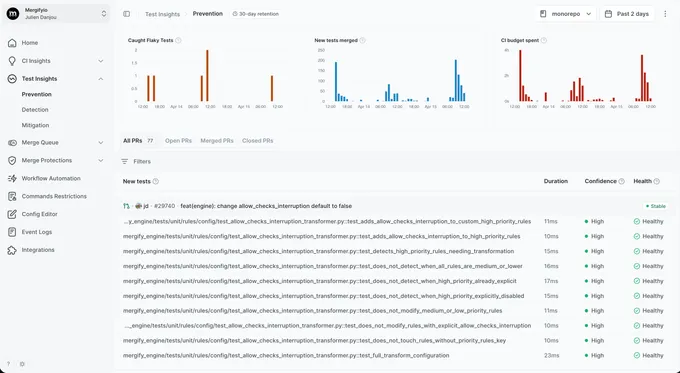

With Mergify. Test Insights treats SKIPPED tests caused by upstream failures as their own signal, distinct from explicit skips. The dashboard surfaces tests that are skipped on every run that has an upstream flake, so the dependency cascade is visible.

Pattern 2

parallel=methods racing on @BeforeMethod state

Symptom. A test class that ran green for years starts failing when `parallel="methods"` is enabled in `testng.xml`, with assertions seeing values from a sibling test.

Root cause. Under `parallel="methods"`, TestNG runs every method on its own thread but reuses the same test instance across them. A field set in `@BeforeMethod` is therefore shared between concurrent methods. `@BeforeMethod` fires before each method, in arbitrary order relative to its siblings, so a test can read a value that another thread wrote milliseconds earlier.

    public class CounterTest {
        private int counter; // shared across parallel methods on the same instance

        @BeforeMethod
        public void reset() { counter = 0; }

        @Test
        public void incrementsToOne() {
            counter++;
            assertEquals(counter, 1); // sometimes 2 when sibling raced ahead
        }

        @Test
        public void alsoIncrementsToOne() {
            counter++;
            assertEquals(counter, 1);
        }
    }

Fix. Prefer per-method local variables over instance fields. When state must persist across methods, switch to a thread-confined data structure (`ThreadLocal`) or move parallelism up to `parallel="classes"`, which runs all methods of a class on the same thread while different classes run on separate threads.

    public class CounterTest {
        @Test
        public void incrementsToOne() {
            int counter = 0;
            counter++;
            assertEquals(counter, 1);
        }
    }
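
When the counter really has to live outside the method, thread confinement is one option. The sketch below uses only the JDK and leaves TestNG out so that it runs on its own. `resetAndIncrement` stands in for a `@BeforeMethod` reset followed by a test body, on the assumption that both execute on the same thread; the class and method names are illustrative.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

final class ThreadConfinedCounter {
    // One counter per thread instead of one field per test instance.
    private static final ThreadLocal<Integer> COUNTER = ThreadLocal.withInitial(() -> 0);

    /** Stand-in for "reset in @BeforeMethod, then run the test body" on the calling thread. */
    static int resetAndIncrement() {
        COUNTER.set(0);
        COUNTER.set(COUNTER.get() + 1);
        return COUNTER.get();
    }

    /** Runs the stand-in concurrently and returns what each run observed. */
    static List<Integer> runInParallel(int tasks) {
        ExecutorService pool = Executors.newFixedThreadPool(tasks);
        try {
            Callable<Integer> task = ThreadConfinedCounter::resetAndIncrement;
            List<Future<Integer>> futures = new ArrayList<>();
            for (int i = 0; i < tasks; i++) {
                futures.add(pool.submit(task));
            }
            List<Integer> observed = new ArrayList<>();
            for (Future<Integer> future : futures) {
                observed.add(future.get());
            }
            return observed;
        } catch (Exception e) {
            throw new IllegalStateException("parallel run failed", e);
        } finally {
            pool.shutdown();
        }
    }
}
```

Every run observes 1 regardless of interleaving, because no thread can see another thread's copy of the counter.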

With Mergify. Test Insights tags failures that only appear with `parallel="methods"` and pass under sequential reruns as parallelism-sensitive. The dashboard surfaces the parallel-only signature so the shared-instance root cause is the obvious lead.

Pattern 3

@DataProvider returning shared mutable state

Symptom. A data-driven test passes in CI for some inputs and fails for others, and re-running with the failing input alone passes.

Root cause. `@DataProvider` returns a 2D array that TestNG iterates over to call the test once per row. If the rows hold mutable objects, every iteration mutates the same instance: row 0 changes a shared `User`, row 1 sees the post-row-0 state. With `@DataProvider(parallel = true)`, the rows even race on the same object across threads.

    private static final User SHARED = new User("Rémy");

    @DataProvider(name = "users")
    public Object[][] users() {
        return new Object[][] { {SHARED}, {SHARED}, {SHARED} };
    }

    @Test(dataProvider = "users")
    public void renamesUser(User u) {
        u.setName(u.getName() + "-renamed");
        // row 0 sees "Rémy-renamed" and passes;
        // row 1 sees "Rémy-renamed-renamed", row 2 sees "...-renamed-renamed-renamed"
        assertEquals(u.getName(), "Rémy-renamed");
    }

Fix. Build a fresh instance per row inside the provider. For expensive setup, build a factory and call it per row.

    @DataProvider(name = "users")
    public Object[][] users() {
        return new Object[][] {
            { new User("Rémy") },
            { new User("Rémy") },
            { new User("Rémy") },
        };
    }
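
For setup that is expensive or repeated across providers, the factory idea can be sketched with a `Supplier`. The helper below is plain Java so that it runs without TestNG. `FreshRows`, `rows`, and the minimal `User` class are assumptions made for the example; a real provider would return the array from its `@DataProvider` method.

```java
import java.util.function.Supplier;

final class FreshRows {

    /** Minimal stand-in for the User class used in the examples above. */
    static final class User {
        private String name;

        User(String name) { this.name = name; }

        String getName() { return name; }

        void setName(String name) { this.name = name; }
    }

    /** Builds single-argument rows, calling the factory once per row. */
    static <T> Object[][] rows(Supplier<T> factory, int count) {
        Object[][] rows = new Object[count][];
        for (int i = 0; i < count; i++) {
            rows[i] = new Object[] { factory.get() }; // fresh instance for every row
        }
        return rows;
    }
}
```

A provider body would then reduce to something like `return FreshRows.rows(() -> new User("Rémy"), 3);`, and a mutation in one row can no longer leak into the next.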

With Mergify. Test Insights groups failures by test method and parameter index. When iteration N of a parameterized method fails consistently and N-1 passed, the dashboard surfaces the iteration-order signature so the shared-state mistake is easy to find.

Pattern 4

@Listeners with global side effects

Symptom. A test passes alone and fails inside the suite with assertions about logging that no one in the failing test wrote, after a custom `@Listeners` was added in an unrelated test class.

Root cause. `@Listeners` is class-level metadata that registers an `ITestListener` for the entire suite, not just the annotated class. A listener that mutates static state (a logger, a metrics counter, a thread-local) keeps that state alive for every test in the run, including tests that never opted in. The first test to fail after the listener was added looks unrelated to it.

    @Listeners(MetricsListener.class)
    public class CheckoutTest { ... }

    // MetricsListener.java
    public class MetricsListener implements ITestListener {
        public void onTestStart(ITestResult r) {
            Metrics.global().increment("test_started");
        }
    }

    // elsewhere
    public class HealthcheckTest {
        @Test
        public void metricsEmpty() {
            // expected: 0. actual: N (CheckoutTest's listener fired across the suite)
            assertEquals(Metrics.global().get("test_started"), 0);
        }
    }

Fix. Scope listeners explicitly. Use `<listeners>` in `testng.xml` for genuinely suite-wide listeners and document them. Avoid mutating static state from listeners; if you must collect cross-test data, use a sink the test can read deterministically.
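
One shape such a sink could take is a counter keyed by test class, so that a test only ever reads the entries it owns. This is a sketch under assumptions, not a TestNG facility: `ScopedMetricsSink` is a hypothetical class, and in a real listener the key would presumably be derived from the `ITestResult` passed to `onTestStart` (for example `r.getTestClass().getName()`).

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical sink: counts are scoped by test class instead of being global. */
final class ScopedMetricsSink {
    private final Map<String, AtomicInteger> startedByClass = new ConcurrentHashMap<>();

    /** Called by the listener when a test in the given class starts. */
    void recordStart(String testClassName) {
        startedByClass
            .computeIfAbsent(testClassName, key -> new AtomicInteger())
            .incrementAndGet();
    }

    /** A test reads only its own class's count; other classes cannot affect it. */
    int startedIn(String testClassName) {
        AtomicInteger count = startedByClass.get(testClassName);
        return count == null ? 0 : count.get();
    }
}
```

With this layout, starts recorded for `CheckoutTest` leave the count for `HealthcheckTest` at zero, so an assertion like the one in `metricsEmpty` stops depending on which other classes ran first.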

With Mergify. Test Insights groups the downstream failures by the test that introduced the listener. When five seemingly unrelated tests fail only after a class with a new @Listeners is added, the dashboard surfaces the upstream culprit.

Pattern 5

IRetryAnalyzer hiding real bugs

Symptom. Your suite passes consistently. A user reports a bug that should have been caught by the test that ran three times yesterday before passing on attempt 3.

Root cause. `IRetryAnalyzer` reruns failing tests up to N times and reports the last result. A real race that loses on attempt 1 and wins on attempt 2 gets reported as PASSED. The bug is still there. The pipeline has decided not to look at it. The retry log line in the report is the only trace, and few teams alert on it.

    public class FlakyRetry implements IRetryAnalyzer {
        private int count = 0;

        @Override
        public boolean retry(ITestResult result) {
            return count++ < 3; // allows up to 3 retries, so up to 4 attempts in total
        }
    }

    public class CheckoutTest {
        @Test(retryAnalyzer = FlakyRetry.class)
        public void chargesCard() {
            // intermittent timing bug; passes 2 of 3 times
        }
    }
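
What the final PASSED status throws away can be shown with a small retry loop that keeps every attempt. This is a plain-Java sketch, not TestNG's retry machinery; `AttemptLog` and its methods are hypothetical names.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.BooleanSupplier;

final class AttemptLog {

    /** Runs the check until it passes or maxAttempts is reached, recording every outcome. */
    static List<Boolean> runWithRetries(BooleanSupplier check, int maxAttempts) {
        List<Boolean> outcomes = new ArrayList<>();
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            boolean passed = check.getAsBoolean();
            outcomes.add(passed);
            if (passed) {
                break; // a framework-level retry stops here and reports PASSED
            }
        }
        return outcomes;
    }

    /** True when the last attempt passed after at least one earlier failure. */
    static boolean passedOnlyAfterRetry(List<Boolean> outcomes) {
        return outcomes.size() > 1 && outcomes.get(outcomes.size() - 1);
    }
}
```

A check that fails twice and then passes yields the outcomes `[false, false, true]`. Reporting only the last element says PASSED; `passedOnlyAfterRetry` is the part worth alerting on.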

Fix. Do not retry at the framework level. When a test is genuinely flaky, fix it. When the fix takes longer than a session, quarantine it instead. That keeps the signal visible without blocking the merge queue.

With Mergify. Test Insights reruns at the CI level with attempt-level result tracking. You see that a test passed on attempt 2 of 3, which is exactly the information IRetryAnalyzer's PASSED status discards. Quarantine kicks in once the pattern is clear.

Pattern 6

ITestContext attribute leakage

Symptom. A test that reads `context.getAttribute("sessionId")` passes alone and fails inside the suite with the wrong session, set by a sibling test.

Root cause. `ITestContext` attributes are scoped per `<test>` in `testng.xml`. A test that sets `context.setAttribute("sessionId", id)` stores that value for every other test in the same `<test>` block. Two tests that both rely on a "current session" attribute walk over each other every run.

    @Test
    public void logsIn(ITestContext context) {
        String sessionId = authClient.login("user").getSessionId();
        context.setAttribute("sessionId", sessionId);
    }

    @Test
    public void buysAThing(ITestContext context) {
        String sessionId = (String) context.getAttribute("sessionId");
        // sometimes the wrong session if a sibling test logged in concurrently
        checkoutClient.buy("widget", sessionId);
    }

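Fix. Pass dependencies through method parameters or instance fields scoped to the test instance. Reserve `ITestContext` attributes for read-only metadata that listeners produce. Instance fields are only safe while the methods of a class do not run concurrently; under `parallel="methods"` they are shared across threads as well.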

    public class CheckoutTest {
        private String sessionId;

        @BeforeMethod
        public void login() {
            sessionId = authClient.login("user").getSessionId();
        }

        @Test
        public void buysAThing() {
            checkoutClient.buy("widget", sessionId);
        }
    }

With Mergify. Test Insights catches the cross-test signature: a test only fails after a specific other test has run, with assertions about session, user, or token that the failing test never set. The dashboard surfaces the predecessor so the misused context is the obvious lead.

Pattern 7

Hard timeouts that flake on slow runners

Symptom. A test annotated `@Test(timeOut = 2000)` passes locally and fails on the slower CI runner with `Method ... didn't finish within the time-out 2000ms`.

Root cause. `timeOut` on a TestNG method is a wall-clock check. A test that takes 1900ms on a fast laptop runs over 2000ms on a throttled CI worker and fails for being too slow, not for being wrong. The threshold is brittle by design: it cannot tell scheduler jitter from a real hang.

    @Test(timeOut = 2000)
    public void connectsToRedis() {
        // 1.8s locally; 2.1s on the slowest CI runner
        redisClient.connect();
        assertEquals(redisClient.ping(), "PONG");
    }

Fix. Either pick a wall-clock budget that absorbs CI variance (3x the local p95) or replace the timeout with an assertion that the operation made progress. For genuine hang detection, configure CI to kill the JVM at the suite level rather than per test.
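
The "3x the local p95" rule is simple arithmetic once local timings exist. The sketch below uses the nearest-rank percentile definition, which is one choice among several. `TimeoutBudget` is a hypothetical helper, and the sample values in the note after the code are made up for illustration.

```java
import java.util.Arrays;

final class TimeoutBudget {

    /** 95th percentile by the nearest-rank method (1-based rank = ceil(0.95 * n)). */
    static long p95(long[] samplesMs) {
        if (samplesMs.length == 0) {
            throw new IllegalArgumentException("need at least one sample");
        }
        long[] sorted = samplesMs.clone();
        Arrays.sort(sorted);
        int rank = (95 * sorted.length + 99) / 100; // integer ceil, avoids float rounding
        return sorted[rank - 1];
    }

    /** Wall-clock budget: a safety factor times the local p95. */
    static long budgetMs(long[] samplesMs, int factor) {
        return factor * p95(samplesMs);
    }
}
```

For local samples of 1600, 1700, 1800, and 1900 ms, the p95 is 1900 ms, so a factor of 3 gives a budget of 5700 ms. That value would replace the 2000 in `@Test(timeOut = 2000)`.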

    @Test
    public void connectsToRedis() {
        redisClient.connect();
        assertEquals(redisClient.ping(), "PONG");
    }

    // JVM-level kill: surefire <forkedProcessTimeoutInSeconds>120</...>

With Mergify. Test Insights links timeout failures to the CI runner type that produced them. When a test only times out on the slowest pool and never on the laptop pool, the dashboard surfaces the resource sensitivity so the wall-clock dependency is the obvious place to look.

Pattern 8

Surefire rerunFailingTestsCount hiding real bugs

Symptom. Your build is green. A user hits a bug that the failed-on-first-attempt test was supposed to catch.

Root cause. Surefire's `rerunFailingTestsCount` reruns failing tests up to N times and lets the build pass when any rerun passes. Surefire documents the parameter for its JUnit providers, so confirm that your Surefire version honors it for TestNG suites. Where it does apply, combining it with TestNG's own `IRetryAnalyzer` gives you two layers of retry, each one hiding a different category of bug. The build is green; the production database is not.

    <plugin>
      <artifactId>maven-surefire-plugin</artifactId>
      <configuration>
        <rerunFailingTestsCount>3</rerunFailingTestsCount>
        <suiteXmlFiles>
          <suiteXmlFile>testng.xml</suiteXmlFile>
        </suiteXmlFiles>
      </configuration>
    </plugin>

Fix. Do not retry at the build level. When a test is genuinely flaky, fix it. When the fix takes longer than a session, quarantine it instead. That keeps the signal visible without blocking the merge queue.

With Mergify. Test Insights reruns at the CI level with attempt-level result tracking. You see that a test passed on attempt 2 of 3, which is exactly the information `rerunFailingTestsCount` and IRetryAnalyzer throw away. Quarantine kicks in once the pattern is clear.