Pattern 1

Parallelizable scope confusion across fixtures

Symptom. Tests that pass in serial mode start failing intermittently after `[assembly: Parallelizable(ParallelScope.Children)]` is enabled, with assertions about state another fixture mutated.

Root cause. `[Parallelizable]` takes a scope (`Self`, `Children`, `Fixtures`, `All`, `None`). Scopes declared at different levels combine: an assembly-level `Children` plus a fixture-level `All` means tests in that fixture run alongside tests in every other fixture too. Anything those threads share (a static field, a singleton service, an environment variable) becomes a race. In the example below, assembly-level `Fixtures` is already enough to let the two fixtures run at the same time.

    [assembly: Parallelizable(ParallelScope.Fixtures)]

    public class FeatureFlagTests {
        public static bool _enabled;

        [SetUp] public void Setup() { _enabled = true; }

        [Test] public void NewBillingPathIsActive() {
            Assert.That(_enabled, Is.True);
        }
    }

    public class LegacyBillingTests {
        [Test] public void DefaultsToLegacyPath() {
            // FeatureFlagTests races ahead and sets _enabled = true; assertion fails
            Assert.That(FeatureFlagTests._enabled, Is.False);
        }
    }

Fix. Pick parallelism explicitly. Annotate fixtures that share static state with [NonParallelizable], or refactor to remove the static state. For per-thread state, use AsyncLocal<T> or ThreadLocal<T> rather than plain statics.

    [NonParallelizable]
    public class FeatureFlagTests {
        private bool _enabled;

        [SetUp] public void Setup() { _enabled = true; }

        [Test] public void NewBillingPathIsActive() {
            Assert.That(_enabled, Is.True);
        }
    }
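
For state that really must be ambient, `AsyncLocal<T>` gives each asynchronous flow its own copy. The sketch below is a minimal illustration, not code from the suite above: `AmbientFlag` and `AmbientFlagDemo` are hypothetical names, and the two `Task.Run` flows stand in for two tests running in parallel.

```csharp
using System.Threading;
using System.Threading.Tasks;

// Hypothetical holder for a flag that would otherwise be a plain static.
public static class AmbientFlag
{
    // AsyncLocal keeps one value per asynchronous flow instead of one per process.
    private static readonly AsyncLocal<bool> Current = new();

    public static bool Enabled
    {
        get => Current.Value;          // false in any flow that never set it
        set => Current.Value = value;
    }
}

public static class AmbientFlagDemo
{
    // Two concurrent flows stand in for two tests running in parallel.
    public static async Task<(bool Writer, bool Reader)> RunAsync()
    {
        var written = new TaskCompletionSource(TaskCreationOptions.RunContinuationsAsynchronously);

        var writer = Task.Run(() =>
        {
            AmbientFlag.Enabled = true;   // visible only inside this flow
            written.SetResult();
            return AmbientFlag.Enabled;
        });

        var reader = Task.Run(async () =>
        {
            await written.Task;           // read only after the write has happened
            return AmbientFlag.Enabled;   // the writer's value did not leak here
        });

        return (await writer, await reader);
    }
}
```

The writer sees its own value while the reader still sees the default, which is the isolation a plain `static bool` lacks. One caveat: a value assigned inside an `async` method does not flow back to its caller, so assign it in the flow that reads it.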

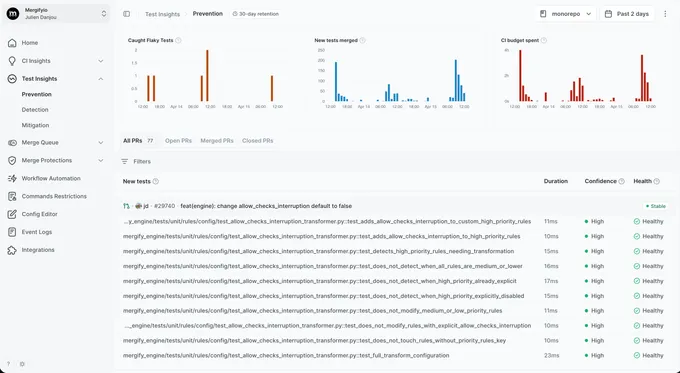

With Mergify. Test Insights tags failures that only appear under parallel runs as parallelism-sensitive. The dashboard surfaces the parallel-only signature so the shared-static root cause is the obvious lead.

Pattern 2

Static field state surviving the test run

Symptom. A test that mutates a static config dictionary passes alone and breaks an unrelated fixture with a value the second test never set.

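Root cause. Even without parallelism, static fields live as long as the AppDomain (in practice, the whole test process). By default NUnit reuses a single fixture instance for every test in the fixture, and even with `[FixtureLifeCycle(LifeCycle.InstancePerTestCase)]` a fresh instance only resets instance fields; static state survives either way. A test that sets `FeatureFlags.Enabled["x"] = true` leaves it set for every test that runs afterwards, regardless of fixture or class.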

    public static class FeatureFlags {
        public static Dictionary<string, bool> Enabled { get; } = new();
    }

    public class FeatureFlagTests {
        [Test] public void NewBilling() {
            FeatureFlags.Enabled["NEW_BILLING"] = true;
            Assert.That(FeatureFlags.Enabled["NEW_BILLING"], Is.True);
        }
    }

    public class PricingTests {
        [Test] public void DefaultPricing() {
            // expects Enabled empty; sees ["NEW_BILLING" => true] from sibling
            Assert.That(Pricing.For("pro"), Is.EqualTo(99));
        }
    }

Fix. Reset static state in [TearDown] on a base test class so every test starts clean. Better: refactor the static into an injected service so each test owns its own instance.

    public abstract class TestBase {
        [TearDown] public void ResetGlobalState() {
            FeatureFlags.Enabled.Clear();
        }
    }

    public class FeatureFlagTests : TestBase {
        [Test] public void NewBilling() { ... }
    }
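
The injected-service variant might look like the following sketch. `FeatureFlagStore` and `PricingService` are hypothetical stand-ins for the `FeatureFlags` and `Pricing` statics above, and the prices are illustrative.

```csharp
using System.Collections.Generic;

// Hypothetical replacement for the static FeatureFlags class.
public sealed class FeatureFlagStore
{
    private readonly Dictionary<string, bool> _enabled = new();

    public void Set(string name, bool value) => _enabled[name] = value;

    // Flags that were never set read as disabled.
    public bool IsEnabled(string name) =>
        _enabled.TryGetValue(name, out var on) && on;
}

// Pricing receives its flags instead of reaching for a static.
public sealed class PricingService
{
    private readonly FeatureFlagStore _flags;

    public PricingService(FeatureFlagStore flags) => _flags = flags;

    public int For(string plan)
    {
        var basePrice = plan == "pro" ? 99 : 0;   // illustrative numbers only
        return _flags.IsEnabled("NEW_BILLING") ? basePrice + 20 : basePrice;
    }
}
```

Each test builds its own `FeatureFlagStore`, so a flag set in one test cannot reach another and no `[TearDown]` reset is needed.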

With Mergify. Test Insights catches the cross-test signature: a test only fails after a specific other test has run, with assertions about a value the failing test never set. The dashboard surfaces the predecessor so the leaking static is the obvious lead.

Pattern 3

SetUpFixture lifecycle surprises

Symptom. A `[SetUpFixture]` initializes a database container; a test failure leaves the container running because `[OneTimeTearDown]` did not fire.

Root cause. `[SetUpFixture]` runs once per namespace before any test in that namespace, with paired `[OneTimeSetUp]` and `[OneTimeTearDown]` hooks. Teardown only fires on graceful shutdown; if the test runner crashes, the host process is killed, or an earlier teardown throws, external resources stay open. On CI the orphaned container keeps running until something kills it, and in the meantime the next run hits "port already allocated".

    [SetUpFixture]
    public class GlobalFixtures {
        private static IContainer _postgres;

        [OneTimeSetUp]
        public async Task Setup() {
            _postgres = new PostgreSqlBuilder().Build();
            await _postgres.StartAsync();
        }

        [OneTimeTearDown]
        public async Task TearDown() {
            // never runs if the host process is killed mid-suite
            await _postgres.DisposeAsync();
        }
    }

Fix. Wrap teardown in a defensive try/catch and register a synchronous `AppDomain.ProcessExit` handler that blocks on async disposal, so cleanup still runs when the process exits without reaching `[OneTimeTearDown]`. `ProcessExit` does not fire when the process is killed outright, so for containers also use Testcontainers' `.WithCleanUp(true)`: its resource reaper (Ryuk) removes them even if your handler never runs.

    [OneTimeSetUp]
    public async Task Setup() {
        _postgres = new PostgreSqlBuilder().WithCleanUp(true).Build();
        await _postgres.StartAsync();
        AppDomain.CurrentDomain.ProcessExit += OnProcessExit;
    }

    private static void OnProcessExit(object? sender, EventArgs e) {
        if (_postgres is null) return;
        try {
            _postgres.DisposeAsync().AsTask().GetAwaiter().GetResult();
        }
        catch {
            // best-effort during process shutdown
        }
    }
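
The defensive teardown itself can be a small helper that never lets a disposal failure escape. This is a sketch under assumptions: `SafeCleanup` and `TryDisposeAsync` are hypothetical names, and it works on any `IAsyncDisposable`, which is how the container above is already disposed.

```csharp
using System;
using System.Threading.Tasks;

public static class SafeCleanup
{
    // Disposes the resource and reports success; failures go to onError instead of propagating.
    public static async Task<bool> TryDisposeAsync(
        IAsyncDisposable? resource,
        Action<Exception>? onError = null)
    {
        if (resource is null) return true;   // setup never got far enough to create it

        try
        {
            await resource.DisposeAsync();
            return true;
        }
        catch (Exception ex)
        {
            onError?.Invoke(ex);             // best-effort: report and keep tearing down
            return false;
        }
    }
}
```

Inside `[OneTimeTearDown]` this could be called as `await SafeCleanup.TryDisposeAsync(_postgres, ex => TestContext.Progress.WriteLine(ex));` so a failed disposal is logged rather than thrown.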

With Mergify. Test Insights notices that the first test of a CI run fails with `port already allocated` only when the previous run's `[OneTimeTearDown]` did not log its completion. The dashboard surfaces the cross-run signature so the missing cleanup is the obvious place to look.

Pattern 4

TestContext attribute leakage between tests

Symptom. A test that reads `TestContext.CurrentContext.Test.Properties["sessionId"]` passes alone and fails inside the suite, with the wrong session set by a sibling fixture.

Root cause. TestContext.CurrentContext.Test.Properties stores attributes per test, but TestContext.Out and TestContext.WriteLine share the test runner's writer. Tests that mutate context-level properties via reflection or rely on inherited [Property] attributes from a base class can see one another's values when fixture order changes.

    [TestFixture]
    public class CheckoutTests {
        [Test, Property("sessionId", "sess-1")]
        public void BuysFromUserOne() {
            var id = TestContext.CurrentContext.Test.Properties.Get("sessionId");
            // Test runner shares property bag during parallel runs;
            // sibling test's property sometimes wins
            AssertCheckout(id);
        }
    }

Fix. Pass session-like values through method arguments (TestCase data) or instance fields scoped to the fixture instance. Reserve TestContext.Properties for read-only metadata declared statically on the test.

    public class CheckoutTests {
        private string _sessionId;

        [SetUp] public async Task SignIn() {
            _sessionId = await Auth.LoginAsync("user");
        }

        [Test] public void BuysFromUserOne() {
            AssertCheckout(_sessionId);
        }
    }
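
Passing the value as `TestCase` data could look like this sketch, which assumes NUnit is referenced as in the snippets above. `CheckoutSessionTests` and the `Checkout` helper are hypothetical stand-ins for the real checkout code.

```csharp
using NUnit.Framework;

[TestFixture]
public class CheckoutSessionTests
{
    // Each case carries its own session id as an argument,
    // so no sibling test can overwrite it.
    [TestCase("sess-1")]
    [TestCase("sess-2")]
    public void BuysWithExplicitSession(string sessionId)
    {
        var receipt = Checkout(sessionId);

        Assert.That(receipt, Is.EqualTo($"paid:{sessionId}"));
    }

    // Hypothetical stand-in for the system under test.
    private static string Checkout(string sessionId) => $"paid:{sessionId}";
}
```

The session id is now visible in the test name and in the failure output, which also makes a failing case easier to identify.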

With Mergify. Test Insights catches the cross-test signature: a test only fails after a specific other test has run, with assertions about a value the failing test never set. The dashboard surfaces the predecessor so the misused TestContext is the obvious lead.

Pattern 5

Async tests that .Result-deadlock on the sync context

Symptom. A test that calls `someAsyncOperation.Result` passes locally and hangs in CI until the runner kills it on timeout.

Root cause. UI frameworks such as WPF and Windows Forms, and classic ASP.NET, install a synchronization context that resumes `await` continuations on the context captured when the await started (ASP.NET Core installs none). Calling `.Result` on a `Task` blocks the calling thread; if the task needs that same thread to run its continuation, it deadlocks. NUnit's runner does not always install a sync context, but tests that pull one in (UI tests, a custom `SynchronizationContext` in `[SetUp]`) trigger the deadlock under load.

    [Test]
    public void GetsUserSynchronously() {
        SynchronizationContext.SetSynchronizationContext(new TestSyncContext());
        var user = userService.GetAsync(1).Result; // deadlocks
        Assert.That(user.Name, Is.EqualTo("Rémy"));
    }

Fix. Make the test method async Task and await the call. NUnit awaits async test methods natively. Avoid mixing sync waits with async code in tests.

    [Test]
    public async Task GetsUser() {
        var user = await userService.GetAsync(1);
        Assert.That(user.Name, Is.EqualTo("Rémy"));
    }

With Mergify. Test Insights groups timeout failures by their cause. When a test consistently times out at exactly the runner's wall-clock limit and never produces output, the dashboard tags it as a deadlock candidate so the .Result call is the obvious place to look.

Pattern 6

DateTime.UtcNow without an injected IClock

Symptom. A test that asserts an event happened "within the last second" passes locally and fails on the slower CI runner with a timestamp 1.2 seconds old.

Root cause. Calling DateTime.UtcNow directly inside production code reads the system clock. A test that asserts on a freshly created timestamp races against scheduler jitter. Locally the assertion fires within a millisecond; on CI the same code runs after a longer pause and the assertion's tolerance window is too tight.

    public class AuditLogger {
        public void Log(string evt) {
            store[evt] = DateTime.UtcNow; // direct clock read
        }
    }

    [Test]
    public void AuditTimestampIsRecent() {
        auditLogger.Log("checkout");
        var logged = store["checkout"];
        Assert.That(logged, Is.EqualTo(DateTime.UtcNow).Within(TimeSpan.FromSeconds(1)));
        // racy under CI scheduler jitter
    }

Fix. Inject TimeProvider (built-in since .NET 8) into the production class so tests can swap in a fixed clock. Production wires TimeProvider.System; tests use FakeTimeProvider from the Microsoft.Extensions.TimeProvider.Testing package, or a small custom subclass of TimeProvider when adding the dependency is overkill.

    public class AuditLogger {
        private readonly TimeProvider _clock;

        public AuditLogger(TimeProvider clock) { _clock = clock; }

        public void Log(string evt) {
            store[evt] = _clock.GetUtcNow();
        }
    }

    [Test]
    public void AuditTimestamp() {
        // fixed instant, built without culture-sensitive string parsing
        var fakeTime = new FakeTimeProvider(new DateTimeOffset(2026, 1, 1, 0, 0, 0, TimeSpan.Zero));
        var logger = new AuditLogger(fakeTime);
        logger.Log("checkout");
        Assert.That(store["checkout"], Is.EqualTo(fakeTime.GetUtcNow()));
    }
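
When the testing package is more than a test needs, a hand-rolled clock is a few lines. The sketch below assumes .NET 8 or later; `FixedTimeProvider` is a hypothetical name, and it only overrides `GetUtcNow`, so timers created through it still follow the system clock.

```csharp
using System;

// Hypothetical minimal clock for tests: time moves only when the test says so.
public sealed class FixedTimeProvider : TimeProvider
{
    private DateTimeOffset _now;

    public FixedTimeProvider(DateTimeOffset start) => _now = start;

    // The only member overridden; everything else keeps TimeProvider's defaults.
    public override DateTimeOffset GetUtcNow() => _now;

    // Lets a test simulate the passage of time explicitly.
    public void Advance(TimeSpan delta) => _now += delta;
}
```

A test can pass `new FixedTimeProvider(start)` wherever a `TimeProvider` is expected and call `Advance` between steps, so assertions compare exact instants instead of tolerance windows.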

With Mergify. Test Insights links timing failures to their CI runner type. When a test only fails on the slower runner pool and never on the laptop pool, the dashboard surfaces the resource sensitivity so the real-clock dependency is the obvious place to look.

Pattern 7

HttpClient instances shared without per-test handlers

Symptom. A test that mocks an HTTP response passes alone and fails inside the suite when a sibling test sees the cached mock response from the previous test.

Root cause. `HttpClient` is meant to be reused across an application's lifetime, because creating a new instance per call against real endpoints can exhaust sockets. A common pattern is therefore to register a single `HttpClient` in DI with a custom `DelegatingHandler`. Tests that swap the handler for a mock and do not reset it afterwards leak the mock into the next test.

    public class HttpClientTests {
        private static readonly MockHandler Handler = new();
        private static readonly HttpClient Shared = new(Handler);

        [Test] public async Task UsesMockResponse() {
            Handler.EnqueueResponse("{}");
            var resp = await Shared.GetAsync("https://api.test/me");
            Assert.That(await resp.Content.ReadAsStringAsync(), Is.EqualTo("{}"));
        }

        [Test] public async Task ReturnsRealValue() {
            // expects unmocked. actual: mock from previous test still set.
            var resp = await Shared.GetAsync("https://api.test/me");
            Assert.That(await resp.Content.ReadAsStringAsync(), Is.Not.EqualTo("{}"));
        }
    }

Fix. Build a fresh `HttpClient` with a per-test handler in `[SetUp]`; a mock handler opens no sockets, so a client per test is cheap. For shared infrastructure, use `IHttpClientFactory` with named clients so each test asks for its own.

    public class HttpClientTests {
        private MockHandler _handler;
        private HttpClient _client;

        [SetUp] public void SetUp() {
            _handler = new MockHandler();
            _client = new HttpClient(_handler);
        }

        [TearDown] public void TearDown() => _client.Dispose();

        [Test] public async Task UsesMockResponse() {
            _handler.EnqueueResponse("{}");
            var resp = await _client.GetAsync("https://api.test/me");
            Assert.That(await resp.Content.ReadAsStringAsync(), Is.EqualTo("{}"));
        }
    }
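
The snippets above use a `MockHandler` without showing it. One possible shape, offered as a sketch rather than the original implementation: an `HttpMessageHandler` that serves queued bodies in order and throws when the queue is empty, so a test that forgot to enqueue fails loudly instead of reusing an earlier response.

```csharp
using System;
using System.Collections.Generic;
using System.Net;
using System.Net.Http;
using System.Text;
using System.Threading;
using System.Threading.Tasks;

// Assumed shape of MockHandler; only EnqueueResponse appears in the examples above.
public sealed class MockHandler : HttpMessageHandler
{
    private readonly Queue<string> _bodies = new();

    public void EnqueueResponse(string body) => _bodies.Enqueue(body);

    protected override Task<HttpResponseMessage> SendAsync(
        HttpRequestMessage request, CancellationToken cancellationToken)
    {
        // Each queued body is served exactly once; nothing is cached between calls.
        if (_bodies.Count == 0)
            throw new InvalidOperationException($"No response queued for {request.RequestUri}");

        var response = new HttpResponseMessage(HttpStatusCode.OK)
        {
            Content = new StringContent(_bodies.Dequeue(), Encoding.UTF8, "application/json"),
            RequestMessage = request,
        };
        return Task.FromResult(response);
    }
}
```

The queue is not thread-safe, which is acceptable here because each test owns its handler; a handler shared across parallel tests would need a concurrent collection.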

With Mergify. Test Insights groups failures whose only signature is unexpected HTTP responses or mismatched assertion strings into a per-suite bucket. The dashboard surfaces the test that first poisoned the shared client so the fix lands at the source.

Pattern 8

Retry attribute hiding real bugs

Symptom. Your suite is green. A user reports a bug that should have been caught by the test that ran three times yesterday before passing on attempt 3.

Root cause. `[Retry(3)]` runs a failing test up to three times in total and reports only the last result. A real race that loses on attempt 1 and wins on attempt 2 gets reported as PASSED. The bug is still there. The pipeline has decided not to look at it.

    [Test, Retry(3)]
    public async Task ChargesCard() {
        // intermittent timing bug; passes 2 of 3 times
        var result = await billingService.ChargeAsync(42);
        Assert.That(result.Success, Is.True);
    }

Fix. Do not retry at the framework level. When a test is genuinely flaky, fix it. When the fix takes longer than a session, quarantine it instead. That keeps the signal visible without blocking the merge queue.

With Mergify. Test Insights reruns at the CI level with attempt-level result tracking. You see that a test passed on attempt 2 of 3, which is exactly the information `[Retry]` throws away. Quarantine kicks in once the pattern is clear.