Pattern 1

AssemblyInitialize racing test discovery

Symptom. A test that depends on a database container started in `[AssemblyInitialize]` fails on the first run after the test runner discovered tests in a different order.

Root cause. [AssemblyInitialize] fires once per assembly, before any test in that assembly runs. It receives a TestContext tied to assembly initialization, not to a specific test, so any code that pulls per-test data from it (deployment items, test categories, properties from a particular test method) reads the wrong values or none at all. The hazard is the global initialization itself: ordering relative to [ClassInitialize], side effects on shared resources before tests start, and brittle assumptions about what the runner has set up by then.

[TestClass]

public class GlobalSetup {

[AssemblyInitialize]

public static async Task Init(TestContext ctx) {

// assumes the first test brings the right deployment items;

// breaks when discovery picks a different test first

var data = ctx.DeploymentDirectory + "/seed.json";

await Database.SeedAsync(File.ReadAllText(data));

}

}

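Fix. Make [AssemblyInitialize] independent of which test runs first. Load shared fixtures from a location that does not depend on deployment, such as an embedded resource or a path relative to the test assembly, instead of from whatever the runner happened to deploy. For per-test deployment items, use [DeploymentItem] on the test method itself and read them inside [TestInitialize] or the test body.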

[TestClass]

public class GlobalSetup {

[AssemblyInitialize]

public static async Task Init(TestContext ctx) {

await Database.SeedAsync(EmbeddedResources.Read("seed.json"));

}

}

[TestClass]

public class CheckoutTests {

[DeploymentItem("Resources/checkout-fixtures.json")]

[TestMethod] public async Task PlacesOrder() { ... }

}
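The fix calls an `EmbeddedResources.Read` helper that the snippet does not define. Below is a minimal sketch of such a helper. It assumes the fixture is compiled into the test assembly as an embedded resource, for example with `<EmbeddedResource Include="Resources\seed.json" />` in the .csproj. The class and method names are hypothetical and only mirror the call above.

```csharp
using System;
using System.IO;
using System.Linq;
using System.Reflection;

// Hypothetical helper matching the EmbeddedResources.Read("seed.json") call above.
// Assumes fixtures are embedded in the test assembly via <EmbeddedResource> items.
public static class EmbeddedResources
{
    public static string Read(string fileName) =>
        Read(fileName, typeof(EmbeddedResources).Assembly);

    public static string Read(string fileName, Assembly assembly)
    {
        // Manifest names look like "<RootNamespace>.<Folder>.<File>", so match on the
        // file-name suffix instead of hardcoding the root namespace.
        var resourceName = assembly.GetManifestResourceNames()
            .FirstOrDefault(name => name.EndsWith("." + fileName, StringComparison.Ordinal));

        if (resourceName is null)
            throw new FileNotFoundException($"No embedded resource ends with '.{fileName}'.", fileName);

        using Stream stream = assembly.GetManifestResourceStream(resourceName)
            ?? throw new FileNotFoundException($"Could not open embedded resource '{resourceName}'.", fileName);
        using var reader = new StreamReader(stream);
        return reader.ReadToEnd();
    }
}
```

The lookup depends only on the compiled assembly, so it returns the same content no matter which test the runner discovers first.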

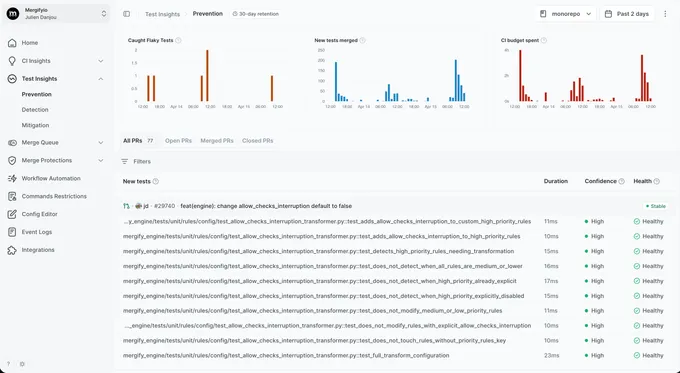

With Mergify. Test Insights tags failures whose only signature is `AssemblyInitialize threw an exception` and groups them by the file the failing test sits in. The dashboard surfaces the discovery-order coupling so the brittle assumption is the obvious lead.

Pattern 2

ClassInitialize ordering surprises across parallel classes

Symptom. Tests that depend on `[ClassInitialize]` setup pass alone and fail under `<Parallelize><Scope>ClassLevel</Scope></Parallelize>` with assertions about state that another class initialized.

Root cause. [ClassInitialize] fires once per class. With `<Scope>ClassLevel</Scope>` in .runsettings, two classes can run their [ClassInitialize] hooks concurrently. If both touch a shared static (a connection pool, a configuration dictionary, a mocking framework's global registry), they race, and whichever class writes last decides what the other class's tests see.

[TestClass]

public class CheckoutTests {

[ClassInitialize]

public static void Init(TestContext _) {

Bootstrap.Configure(env: "checkout"); // mutates a static AppConfig

}

}

[TestClass]

public class InventoryTests {

[ClassInitialize]

public static void Init(TestContext _) {

Bootstrap.Configure(env: "inventory"); // races with CheckoutTests

}

}

// runsettings: <Parallelize><Scope>ClassLevel</Scope></Parallelize>

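Fix. Avoid mutating shared static state in [ClassInitialize]. Move configuration into instance-per-test scope so each class builds and holds its own. A lock around the mutation is not enough: it makes each write atomic, but InventoryTests can still overwrite the configuration while CheckoutTests' methods are running. For test classes that genuinely conflict, mark them [DoNotParallelize] so MSTest does not run them alongside other tests.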

[TestClass]

[DoNotParallelize]

public class CheckoutTests {

[ClassInitialize]

public static void Init(TestContext _) {

Bootstrap.Configure(env: "checkout");

}

}
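To see why a lock does not rescue the shared static, the sketch below replays the two [ClassInitialize] calls in sequence against a hypothetical `StaticBootstrap`, then shows the instance-scoped alternative as an immutable `AppConfig` record that each test class creates for itself. Both types are illustrative stand-ins, not the `Bootstrap` API from the example, and the connection-string format is an assumption.

```csharp
// Hypothetical stand-in for the Bootstrap class above: one process-wide setting.
public static class StaticBootstrap
{
    private static readonly object Gate = new object();

    public static string Env { get; private set; } = "";

    // The lock makes each write atomic, but it cannot stop the last writer
    // from winning for every class that reads Env afterwards.
    public static void Configure(string env)
    {
        lock (Gate) { Env = env; }
    }
}

// Fix sketch: each test class builds and holds its own immutable configuration.
public sealed record AppConfig(string Env, string ConnectionString)
{
    public static AppConfig For(string env) =>
        new AppConfig(env, $"Server=localhost;Database={env}_test");
}
```

In a test class, a field such as `private static readonly AppConfig Config = AppConfig.For("checkout");` replaces the `Bootstrap.Configure` call, and the code under test receives the config through its constructor instead of reading a global.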

With Mergify. Test Insights tags failures that only appear with parallel runsettings as parallelism-sensitive. The dashboard surfaces the parallel-only signature so the [DoNotParallelize] decision is straightforward.

Pattern 3

DeploymentItem races on shared files

Symptom. A test that copies a fixture file via `[DeploymentItem]` passes alone and fails when run alongside another test that writes to the same path.

Root cause. [DeploymentItem] copies a file or directory into TestContext.DeploymentDirectory before the test runs. The deployment directory is shared across tests in the same run by default. If two parallel tests both write to DeploymentDirectory/output.json, they race; the loser sees the wrong content or a partially written file.

[TestClass]

public class ExporterTests {

public TestContext TestContext { get; set; } // set by MSTest; provides DeploymentDirectory

[TestMethod]

[DeploymentItem("Fixtures/template.json")]

public void ExportsOrder() {

var output = Path.Combine(TestContext.DeploymentDirectory, "output.json");

File.WriteAllText(output, exporter.Render(order));

Assert.IsTrue(File.Exists(output));

}

[TestMethod]

[DeploymentItem("Fixtures/template.json")]

public void ExportsInvoice() {

var output = Path.Combine(TestContext.DeploymentDirectory, "output.json");

File.WriteAllText(output, exporter.Render(invoice));

// sometimes asserts on content from ExportsOrder under parallel

StringAssert.Contains(File.ReadAllText(output), "INV-");

}

}

Fix. Write to a per-test path derived from TestContext.TestName or Path.GetTempFileName(), and add a unique suffix when names can repeat (data-driven rows, or two runs sharing an agent). Reserve DeploymentDirectory for read-only fixtures.

[TestMethod]

public void ExportsOrder() {

var output = Path.Combine(Path.GetTempPath(), $"{TestContext.TestName}-{Guid.NewGuid():N}.json"); // unique per test and per run

File.WriteAllText(output, exporter.Render(order));

Assert.IsTrue(File.Exists(output));

}
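When a test writes more than one file, a per-test scratch directory generalizes the same idea. This is a sketch with a hypothetical `TestPaths` helper; the directory layout under the system temp path is an assumption.

```csharp
using System;
using System.IO;

// Hypothetical helper: one fresh directory per test invocation.
public static class TestPaths
{
    public static string CreateScratchDirectory(string testName)
    {
        // Data-driven test names can contain characters that are invalid in file names.
        foreach (char c in Path.GetInvalidFileNameChars())
            testName = testName.Replace(c, '_');

        // The GUID keeps paths unique across parallel workers, data rows,
        // and concurrent runs on the same machine.
        string dir = Path.Combine(Path.GetTempPath(), "mstest-scratch", $"{testName}-{Guid.NewGuid():N}");
        Directory.CreateDirectory(dir); // also creates the "mstest-scratch" parent if needed
        return dir;
    }
}
```

In a test, `var output = Path.Combine(TestPaths.CreateScratchDirectory(TestContext.TestName), "output.json");` gives each invocation its own file, and a [TestCleanup] method can delete the directory afterwards.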

With Mergify. Test Insights groups failures whose only signature is wrong file content or `IOException: file in use` into a per-suite bucket. The dashboard surfaces the test that first wrote to the shared path so the per-test path fix lands at the source.

Pattern 4

TestContext mutated across tests

Symptom. A test that reads `TestContext.Properties["tenantId"]` passes alone and fails inside the suite with a tenant set by a sibling.

Root cause. MSTest gives each test its own TestContext, so TestContext.Properties is a per-test bag. The leak comes from helpers that cache a context, or its Properties, in a static field: from then on, a test reads whatever the most recently initialized test wrote. Under parallel runs, that is often a sibling running on another worker.

[TestClass]

public class TenantTests {

public TestContext TestContext { get; set; }

[TestInitialize]

public void Setup() {

TestContext.Properties["tenantId"] = TenantHelper.PickRandom().Id;

}

[TestMethod] public void ProcessesOrder() {

var id = (string)TestContext.Properties["tenantId"];

// sometimes the wrong tenant under parallel runs

Assert.IsNotNull(orderService.For(id).Process());

}

}
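The leak needs a helper that holds on to a context. The sketch below replays it without MSTest: a hypothetical `CachingTenantHelper` keeps the last property bag it saw in a static field, and a plain dictionary stands in for `TestContext.Properties`.

```csharp
using System.Collections.Generic;

// Anti-pattern (hypothetical helper): remembers the last property bag it was handed
// in a static field, so every caller reads whichever test attached most recently.
public static class CachingTenantHelper
{
    private static IDictionary<string, object> _lastProperties = new Dictionary<string, object>();

    // Typically called from each test's [TestInitialize].
    public static void Attach(IDictionary<string, object> properties) => _lastProperties = properties;

    // Read later from a test body or a shared fixture.
    public static string CurrentTenantId => (string)_lastProperties["tenantId"];
}
```

Neither test's own bag changes; the wrong value comes entirely from the helper's cached reference, which is why moving the value into an instance field removes the problem.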

Fix. Pass per-test values through instance fields, not TestContext.Properties. TestContext is meant for run metadata (output, deployment paths), not for state your tests need to read deterministically.

[TestClass]

public class TenantTests {

private string _tenantId;

[TestInitialize]

public void Setup() {

_tenantId = TenantHelper.PickRandom().Id;

}

[TestMethod] public void ProcessesOrder() {

Assert.IsNotNull(orderService.For(_tenantId).Process());

}

}

With Mergify. Test Insights catches the cross-test signature: a test only fails after a specific other test has run, with assertions about a value the failing test never set. The dashboard surfaces the predecessor so the misused TestContext is the obvious lead.

Pattern 5

Parallel execution settings interacting with shared state

Symptom. A test suite with `<MSTestV2><Parallelize><Workers>4</Workers></Parallelize></MSTestV2>` in `.runsettings` fails with assertions about a static counter another test incremented.

Root cause. MSTest v2's parallel scope (MethodLevel or ClassLevel) and worker count come from .runsettings (or an assembly-level [Parallelize] attribute). With MethodLevel, test methods can run concurrently, but MSTest still creates a separate test class instance per test method, so instance fields are safe. The race is in shared process-wide state: static fields, registered services, a mocking framework's verifier, and any external resource the methods touch.

// .runsettings

<RunSettings>

<MSTest>

<Parallelize>

<Workers>4</Workers>

<Scope>MethodLevel</Scope>

</Parallelize>

</MSTest>

</RunSettings>

[TestClass]

public class CounterTests {

private static int _counter;

[TestInitialize] public void Reset() { _counter = 0; }

[TestMethod] public void IncrementsToOne() {

_counter++;

Assert.AreEqual(1, _counter); // sometimes 2 under parallel methods

}

}

Fix. Pick parallelism that matches the suite's isolation. MethodLevel requires that no two test methods share mutable state. ClassLevel runs the methods of one class sequentially while different classes run in parallel, so static state is tolerable only when each class owns it. For per-test isolation, prefer instance fields and local variables.

// .runsettings

<RunSettings>

<MSTest>

<Parallelize>

<Workers>4</Workers>

<Scope>ClassLevel</Scope>

</Parallelize>

</MSTest>

</RunSettings>
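MSTest also accepts the same parallelization settings as an assembly-level attribute in code. A sketch, assuming the test project references MSTest v2 or later; the worker count simply mirrors the runsettings above, and the file it lives in is up to you.

```csharp
using Microsoft.VisualStudio.TestTools.UnitTesting;

// Assembly-level equivalent of the <Parallelize> block above. Place it once in any
// file of the test project, after the using directives and before any type declarations.
[assembly: Parallelize(Workers = 4, Scope = ExecutionScope.ClassLevel)]
```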

With Mergify. Test Insights tags failures that only appear with method-level parallelism and pass under class-level reruns as parallelism-sensitive. The dashboard surfaces the parallel-only signature so shared static or other process-wide mutable state is the obvious lead.

Pattern 6

Async test signatures missing the Task return

Symptom. An async test declared `[TestMethod] public async void` stays green locally and in CI even when its assertion is false: a false positive that hides a real failure.

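Root cause. MSTest awaits an async test method only when it returns Task (or ValueTask in recent versions). An async void method gives the runner nothing to await: it returns at the first incomplete await, and any assertion failure after that point is raised outside the test, on whatever synchronization context or thread-pool thread runs the continuation, where MSTest cannot attribute it to the test. Recent MSTest adapters also flag async void test methods with a signature warning, which is easy to miss in CI logs.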

[TestMethod]

public async void ChargesUser() {

var ok = await billingService.ChargeAsync(42);

Assert.IsTrue(ok); // never observed; method returns void immediately

}

Fix. Always return Task from async test methods, and treat async void in test code as a bug. The MSTest analyzers and recent adapters flag the signature, but a warning does not fail the build unless you configure warnings as errors.

[TestMethod]

public async Task ChargesUser() {

var ok = await billingService.ChargeAsync(42);

Assert.IsTrue(ok);

}
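The difference between the two signatures can be reproduced without a test runner. In this illustrative sketch (class and method names are hypothetical), each body awaits a task the caller completes later. The caller of the async void version has nothing to wait on, while the Task version surfaces the simulated assertion failure to whoever awaits it.

```csharp
using System;
using System.Threading.Tasks;

// Illustrative only: the same "test body" written both ways, gated on a task the
// caller completes, so the ordering is deterministic.
public sealed class AsyncSignatureDemo
{
    public bool AssertionReached { get; private set; }

    // async void: the caller gets nothing to await and resumes as soon as the
    // body reaches its first incomplete await.
    public async void BodyAsVoid(Task pendingCharge)
    {
        await pendingCharge;
        AssertionReached = true;
    }

    // async Task: the caller can await completion, and an exception thrown after
    // the await (a simulated failed assertion) surfaces on the returned Task.
    public async Task BodyAsTask(Task pendingCharge)
    {
        await pendingCharge;
        AssertionReached = true;
        throw new InvalidOperationException("Assert.IsTrue failed.");
    }
}
```

A runner holding only the async void call has already moved on by the time the body resumes, which is why its outcome cannot reflect the assertion.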

With Mergify. Test Insights catches false positives by comparing the test's reported duration to assertions logged. A test that reports PASSED with a sub-millisecond duration despite calling async code is the fingerprint, and the dashboard flags it as suspect-pass.

Pattern 7

DateTime.UtcNow without TimeProvider

Symptom. A test that asserts an event happened "within the last second" passes locally and fails on the slower CI runner with a timestamp 1.2 seconds old.

Root cause. Calling DateTime.UtcNow directly inside production code reads the system clock. A test that asserts on a freshly created timestamp races against scheduler jitter. Locally the assertion fires within a millisecond; on CI the same code runs after a longer pause and the assertion's tolerance window is too tight.

public class AuditLogger {

public void Log(string evt) => store[evt] = DateTime.UtcNow;

}

[TestMethod]

public void AuditTimestampIsRecent() {

auditLogger.Log("checkout");

var logged = store["checkout"];

Assert.IsTrue((DateTime.UtcNow - logged).TotalSeconds < 1);

// racy under CI scheduler jitter

}

Fix. Inject TimeProvider (built into .NET 8 and later, and available to older targets through the Microsoft.Bcl.TimeProvider package) into the production class so tests can swap in a fixed clock. Production wires TimeProvider.System; tests use FakeTimeProvider from the Microsoft.Extensions.TimeProvider.Testing package, or a small custom subclass when adding the dependency is overkill.

public class AuditLogger {

private readonly TimeProvider _clock;

public Dictionary<string, DateTimeOffset> Store { get; } = new();

public AuditLogger(TimeProvider clock) { _clock = clock; }

public void Log(string evt) => Store[evt] = _clock.GetUtcNow();

}

[TestMethod]

public void AuditTimestamp() {

var fake = new FakeTimeProvider(new DateTimeOffset(2026, 1, 1, 0, 0, 0, TimeSpan.Zero));

var logger = new AuditLogger(fake);

logger.Log("checkout");

Assert.AreEqual(fake.GetUtcNow(), logger.Store["checkout"]);

}
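For the small-custom-subclass option, here is a sketch of a hand-rolled clock. It assumes .NET 8 or later, where `TimeProvider.GetUtcNow` is virtual. `FixedTimeProvider` and its `Advance` method are hypothetical names, and `AuditLogger` repeats the shape of the class above, with the store exposed as a `Store` property, so the block compiles on its own.

```csharp
using System;
using System.Collections.Generic;

// Minimal hand-rolled test clock: time moves only when the test says so.
public sealed class FixedTimeProvider : TimeProvider
{
    private DateTimeOffset _utcNow;

    public FixedTimeProvider(DateTimeOffset start) => _utcNow = start;

    public override DateTimeOffset GetUtcNow() => _utcNow;

    public void Advance(TimeSpan by) => _utcNow += by;
}

// Production class reads time only through the injected provider.
public sealed class AuditLogger
{
    private readonly TimeProvider _clock;

    public AuditLogger(TimeProvider clock) => _clock = clock;

    public Dictionary<string, DateTimeOffset> Store { get; } = new Dictionary<string, DateTimeOffset>();

    public void Log(string evt) => Store[evt] = _clock.GetUtcNow();
}
```

With the clock under test control, a "1.2 seconds later" scenario becomes an exact assertion instead of a tolerance window.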

With Mergify. Test Insights links timing failures to their CI runner type. When a test only fails on the slower runner pool and never on the laptop pool, the dashboard surfaces the resource sensitivity so the real-clock dependency is the obvious place to look.

Pattern 8

TestProperty rerun configuration hiding real bugs

Symptom. Your build is green. A user hits a bug that should have been caught by a test that ran four times yesterday before passing.

Root cause. Retry plugins for MSTest add a rerun-on-failure setting. A real race that loses on attempt 1 and wins on attempt 2 gets reported as PASSED. The bug is still there; the pipeline has simply decided not to look at it.

// .runsettings (please don't)

<RunSettings>

<RunConfiguration>

<TestSessionTimeout>3600000</TestSessionTimeout>

</RunConfiguration>

<MSTestRetry>

<RetryCount>3</RetryCount>

</MSTestRetry>

</RunSettings>

Fix. Do not retry at the framework level. When a test is genuinely flaky, fix it. When the fix takes longer than a session, quarantine it instead. That keeps the signal visible without blocking the merge queue.
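The information a framework-level retry discards is easy to keep if each attempt is recorded. The sketch below illustrates attempt-level classification; it is not Mergify's implementation, and the enum and the three labels are assumptions.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public enum AttemptOutcome { Passed, Failed }

// Illustrative classifier: looks at every attempt, not just the last one.
public static class AttemptClassifier
{
    public static string Classify(IReadOnlyList<AttemptOutcome> attempts)
    {
        if (attempts.Count == 0)
            throw new ArgumentException("At least one attempt is required.", nameof(attempts));

        if (attempts.All(a => a == AttemptOutcome.Passed)) return "passed";

        // Failed at least once but ended green: a retry would have hidden this.
        if (attempts[attempts.Count - 1] == AttemptOutcome.Passed) return "flaky";

        return "failed";
    }
}
```

A retry-until-green configuration reports only the last element of that list, which is why `Failed, Passed` and a clean `Passed` look identical in its output.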

With Mergify. Test Insights reruns at the CI level with attempt-level result tracking. You see that a test passed on attempt 2 of 3, which is exactly the information `MSTestRetry` configurations throw away. Quarantine kicks in once the pattern is clear.