Pattern 1

parallelize(workers:) races on shared state

Symptom. A test that mutates a class-level cache passes alone and fails after `parallelize(workers: :number_of_processors)` is enabled, with assertions seeing values from a sibling test.

Root cause. parallelize splits the suite across several workers: forked processes by default, threads when you pass with: :threads. Anything those workers share inside Ruby (a class variable, a thread-local that leaks across the fiber boundary, a singleton instance) becomes a race under threads. Forks are isolated, so in-process state is per-worker, but tests that land in the same worker still share it, and tests that hit the same external resource (a fixed Redis key, a shared file) collide just like they would in any parallel test framework.

```ruby
class CounterTest < ActiveSupport::TestCase
  parallelize(workers: 4)

  @@count = 0

  test "increments the counter" do
    @@count += 1
    assert_equal 1, @@count
  end

  test "increments again" do
    @@count += 1
    assert_equal 1, @@count # fails when sibling already incremented
  end
end
```
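The difference between the two worker types can be seen without Rails. The sketch below is plain Ruby, not the Rails test runner: Counter is a hypothetical stand-in for any class that keeps class-level state, and fork assumes a Unix-like platform. A thread's write is visible to the parent afterwards; a forked child's write is not.

```ruby
# Hypothetical stand-in for any class that keeps process-wide state.
class Counter
  @count = 0
  class << self
    attr_accessor :count
  end
end

# Thread worker: shares the parent's memory, so the write is visible afterwards.
Thread.new { Counter.count += 1 }.join
after_thread = Counter.count

# Forked worker: works on a copy of memory; its write never reaches the parent.
reader, writer = IO.pipe
pid = fork do
  reader.close
  Counter.count += 1
  writer.puts Counter.count
  writer.close
  exit!(0) # skip at_exit handlers inherited from the parent
end
writer.close
child_saw = reader.read.to_i
reader.close
Process.wait(pid)
after_fork = Counter.count

puts "after thread: #{after_thread}"
puts "child saw: #{child_saw}, parent after fork: #{after_fork}"
```

This is why a class-level cache usually survives process-based workers but not thread-based ones, and why external resources need scoping in both modes.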

Fix. Move shared state into per-test instance variables (set in setup) or scope external resources by name. For genuine global side effects, use parallelize_setup + parallelize_teardown hooks to give each worker its own database, Redis namespace, or temp directory.

```ruby
class CounterTest < ActiveSupport::TestCase
  parallelize(workers: 4)

  # Fresh counter for every test; nothing is shared between tests or workers.
  setup { @count = 0 }

  test "increments the counter" do
    @count += 1
    assert_equal 1, @count
  end
end
```
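As a rough illustration of scoping external resources by worker, the helper below derives a Redis namespace and a temp directory from the worker number that Rails passes to parallelize_setup and parallelize_teardown. WorkerScope and its method names are hypothetical, and the Rails wiring appears only in a comment so the snippet runs without Rails.

```ruby
require "tmpdir"

# Hypothetical helper: derives resource names that are unique per worker.
module WorkerScope
  module_function

  # For worker 3 with the default base this returns "cache:test:3".
  def redis_namespace(worker, base: "cache:test")
    "#{base}:#{worker}"
  end

  # A directory path that no other worker will use.
  def tmp_dir(worker, root: Dir.tmpdir)
    File.join(root, "test-worker-#{worker}")
  end
end

# In a Rails test helper the wiring might look like this (not executed here):
#
#   class ActiveSupport::TestCase
#     parallelize_setup    { |worker| FileUtils.mkdir_p(WorkerScope.tmp_dir(worker)) }
#     parallelize_teardown { |worker| FileUtils.rm_rf(WorkerScope.tmp_dir(worker)) }
#   end

dirs = (1..4).map { |worker| WorkerScope.tmp_dir(worker) }
puts WorkerScope.redis_namespace(3)
puts dirs.uniq.size
```

Deriving every name from the worker number keeps the scoping in one place, so a new shared resource only needs one more method here.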

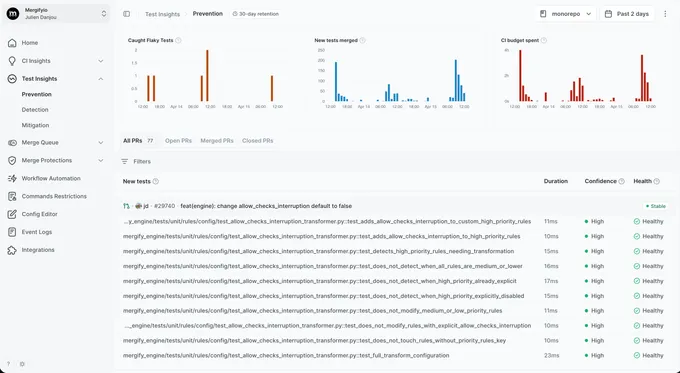

With Mergify. Test Insights tags failures that appear only under parallel runs, and never under sequential reruns, as parallelism-sensitive. The dashboard surfaces the parallel-only signature so you know to look for class-level state.

Pattern 2

i_suck_and_my_tests_are_order_dependent! masking real coupling

Symptom. A legacy test class is annotated with the order-dependent escape hatch and passes; removing the hatch reveals tests that depend on each other in non-obvious ways.

Root cause. Minitest randomizes test order to expose hidden coupling. i_suck_and_my_tests_are_order_dependent! turns the shuffle off for a class. The name is intentionally hostile because the only honest reason to use it is that the suite has a coupling bug you are not ready to fix. Leaving it in place hides the bug; the next refactor changes the implicit order and the test fails for what looks like an unrelated reason.

```ruby
class LegacyOrderTest < ActiveSupport::TestCase
  i_suck_and_my_tests_are_order_dependent!

  test "1: creates a user" do
    @@user = User.create!(name: "Rémy")
  end

  test "2: reads the user" do
    # depends on test 1 having run first; class variable smuggles state
    assert_equal "Rémy", @@user.name
  end
end
```

Fix. Refactor to make every test independent: build state in setup or inside the test, drop the order-dependent annotation, and let the shuffle catch any remaining coupling.

```ruby
class LegacyOrderTest < ActiveSupport::TestCase
  # Every test builds its own user; no state crosses test boundaries.
  setup do
    @user = User.create!(name: "Rémy")
  end

  test "creates a user" do
    assert @user.persisted?
  end

  test "reads the user" do
    assert_equal "Rémy", @user.name
  end
end
```
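To see why the shuffle exposes coupling, the sketch below models each test as a lambda and runs a group in every possible order. It is plain Ruby, not Minitest; failing_orders and the two test groups are hypothetical names used only for illustration.

```ruby
# Each "test" is a lambda that receives a shared state hash and returns true on pass.
coupled = {
  creates: ->(state) { state[:user] = "Rémy"; true },
  reads:   ->(state) { state[:user] == "Rémy" } # relies on :creates having run first
}

independent = {
  creates: ->(_state) { user = "Rémy"; !user.nil? },
  reads:   ->(_state) { user = "Rémy"; user == "Rémy" }
}

# Runs the tests in every possible order and returns the orders in which
# at least one test failed. An order stops at its first failure.
def failing_orders(tests)
  tests.keys.permutation.reject do |order|
    state = {}
    order.all? { |name| tests[name].call(state) }
  end
end

p failing_orders(coupled)
p failing_orders(independent)
```

The coupled group fails only in the order where the reader runs first, which is the order a random seed eventually picks. The independent group passes in every order.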

With Mergify. Test Insights records the seed for every run. When a class still depends on order after the annotation is removed, failures recur on specific seeds; the dashboard surfaces them so the missed coupling is easy to find.

Pattern 3

Fixtures plus transactional tests in JS-driven specs

Symptom. A system test that runs under a JavaScript-capable driver (Capybara + Selenium/Cuprite; `js: true` in RSpec terms) sees a half-empty database; the data the test created in a fixture or `setup` block is missing in the browser.

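Root cause. Rails wraps every test in a database transaction (use_transactional_tests = true) and rolls it back at the end. The transaction is held on the test's own database connection, so code that runs on that connection sees the data. A Capybara JS driver points a real browser at an app server, and the browser only sees what that server can read. Since Rails 5.1 the built-in system test server shares the test's connection, so the problem appears when a request is handled on a different connection: a server running in a separate process, or code that checks out its own connection. That connection cannot see uncommitted data from another connection. The browser logs in, the user does not exist on the server's connection, the test fails.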

```ruby
class CheckoutSystemTest < ApplicationSystemTestCase
  driven_by :selenium, using: :headless_chrome

  setup do
    @user = User.create!(email: "user@example.com", password: "secret")
  end

  test "user signs in" do
    visit "/login"
    fill_in "Email", with: @user.email
    # browser cannot see @user; sign-in fails with 'invalid credentials'
    fill_in "Password", with: "secret"
    click_button "Sign in"
    assert_text "Welcome back"
  end
end
```

Fix. Disable transactional tests for system specs and use a truncation-based cleaner, or keep the Capybara server inside the test process so it shares the test's connection (the default for Rails system tests since 5.1). The simplest path when sharing is not an option: switch the cleaning strategy to truncation for JS specs via database_cleaner-active_record.

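```ruby
# test/test_helper.rb
require "database_cleaner/active_record"

class ActionDispatch::SystemTestCase
  # Commit data for real so the app server's connection can see it.
  self.use_transactional_tests = false

  setup do
    DatabaseCleaner.strategy = :truncation
    DatabaseCleaner.start
  end

  teardown { DatabaseCleaner.clean }
end
```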

With Mergify. Test Insights catches the signature: a system test fails consistently with empty-database errors and passes when re-run with the rest of the suite cleared (truncation from the previous run masks the issue). The dashboard surfaces the affected test type by tag.

Pattern 4

ActiveSupport::TestCase singleton leakage

Symptom. A test that stubs `Rails.application.config.feature_x` passes alone and breaks an unrelated test in another file with a value the second test never set.

Root cause. Rails configuration objects are global singletons. A test that mutates Rails.application.config.x or stubs a method on a class without unstubbing leaves that change in place for every later test in the worker. Class reopening, monkey patching, and constant reassignment all live for the life of the process.

```ruby
class FeatureFlagTest < ActiveSupport::TestCase
  test "with the new path" do
    Rails.application.config.use_new_billing = true
    assert_equal 49, Pricing.for(:pro)
  end
  # no teardown; config stays set
end

class LegacyBillingTest < ActiveSupport::TestCase
  test "with the legacy path" do
    # expects use_new_billing == false (default). actual: true, leaked.
    assert_equal 99, Pricing.for(:pro)
  end
end
```

Fix. Use a temporary stub helper that auto-restores (stub_const from Active Support's testing helpers restores a constant at the end of its block), or a per-test reset in teardown. For configuration, capture the original value and restore it explicitly.

```ruby
class FeatureFlagTest < ActiveSupport::TestCase
  setup do
    # Remember the value we found so teardown can put it back.
    @original = Rails.application.config.use_new_billing
    Rails.application.config.use_new_billing = true
  end

  teardown do
    Rails.application.config.use_new_billing = @original
  end

  test "with the new path" do
    assert_equal 49, Pricing.for(:pro)
  end
end
```
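The capture-and-restore step can also be wrapped in a block helper so the restore cannot be forgotten. This is a minimal sketch: with_config is a hypothetical helper, not part of Rails, and AppConfig stands in for Rails.application.config so the snippet runs on its own.

```ruby
# Stand-in for Rails.application.config (hypothetical; any object with accessors works).
AppConfig = Struct.new(:use_new_billing)
CONFIG = AppConfig.new(false)

# Sets +key+ to +value+ for the duration of the block, then restores the
# original value, even if the block raises.
def with_config(config, key, value)
  original = config.public_send(key)
  config.public_send("#{key}=", value)
  begin
    yield
  ensure
    config.public_send("#{key}=", original)
  end
end

inside = with_config(CONFIG, :use_new_billing, true) { CONFIG.use_new_billing }

begin
  with_config(CONFIG, :use_new_billing, true) { raise "boom" }
rescue RuntimeError
  # the flag is restored even though the block raised
end

puts inside
puts CONFIG.use_new_billing
```

The ensure clause is what makes the helper safe: a failing assertion inside the block still leaves the configuration as it was found.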

With Mergify. Test Insights groups the downstream failures by the test they all follow. When five seemingly unrelated tests fail only after a config-mutating test runs first, the dashboard surfaces the upstream culprit instead of pointing at five red herrings.

Pattern 5

Time.current without travel_back

Symptom. A test that uses `travel_to` passes, and the next test that touches `Time.current` fails with a date months in the past or future.

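Root cause. travel_to stubs the clock for every later Time.current call in the worker until travel_back runs. ActiveSupport::Testing::TimeHelpers calls travel_back from its after_teardown hook, so the leak appears when that hook is bypassed: an after_teardown override that does not call super, time helpers used outside a test's lifecycle, or a different time-stubbing library. In those cases, calling travel_to without a paired travel_back (or without using the block form) leaves the clock frozen for every test that runs afterwards on the same worker.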

```ruby
class InvitationTest < ActiveSupport::TestCase
  test "expires after 7 days" do
    travel_to Time.zone.parse("2026-01-01")
    invite = Invitation.create!
    travel 8.days
    assert invite.expired?
    # missing travel_back
  end
end

class SessionTest < ActiveSupport::TestCase
  test "session token is valid for an hour" do
    token = SessionToken.new(@user)
    # Time.current is still January 9 2026; assertion fails
    assert_in_delta 1.hour.from_now, token.expires_at, 1.minute
  end
end
```

Fix. Use the block form of travel_to so the clock auto-restores at the end of the block. Or include a global teardown { travel_back } in the base test case.

```ruby
# test/test_helper.rb
class ActiveSupport::TestCase
  teardown { travel_back } # belt-and-braces
end

# in the test
test "expires after 7 days" do
  travel_to Time.zone.parse("2026-01-01") do
    invite = Invitation.create!
    travel 8.days
    assert invite.expired?
  end
end
```
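A leaked clock can also be detected directly. The sketch below assumes the stub replaces Time.now but leaves the operating system clock alone, which is how Active Support's time helpers behave; clock_stubbed? is a hypothetical helper, and the hand-rolled stub in the demonstration only stands in for travel_to so the snippet runs without Rails.

```ruby
# Returns true when Time.now disagrees with the operating system's wall clock
# by more than +tolerance+ seconds, which suggests a time stub is still active.
def clock_stubbed?(tolerance: 5.0)
  real = Process.clock_gettime(Process::CLOCK_REALTIME)
  (Time.now.to_f - real).abs > tolerance
end

before = clock_stubbed?

# Hand-rolled stub standing in for travel_to: keep the real method under an
# alias, then pin Time.now to the Unix epoch.
Time.singleton_class.send(:alias_method, :__real_now, :now)
Time.define_singleton_method(:now) { Time.at(0) }
during = clock_stubbed?

# Stand-in for travel_back: put the real method back and drop the alias.
Time.singleton_class.send(:alias_method, :now, :__real_now)
Time.singleton_class.send(:remove_method, :__real_now)
after = clock_stubbed?

puts [before, during, after].inspect
```

Called at the start of a test, a check like this turns a confusing date assertion into a direct "the clock was already stubbed" failure that points at the previous test.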

With Mergify. Test Insights shows the cross-test time signature: a test fails only when run after a known time-mutating test, and only when its assertions touch the clock. The dashboard surfaces the ordering so the missed travel_back is easy to locate.

Pattern 6

Mocha mocks that forgot to verify

Symptom. A test asserts a mock was called, the assertion passes, but a sibling test fails on a stubbed method that was never declared in its own scope.

Root cause. Mocha stubs methods globally on the receiver instance or class. Without mocha/minitest's automatic teardown wiring, a stub set in test A persists into test B. Even with the wiring, calling stubs(:method) on a class object outside an Object#expects block leaks if the test exits early.

```ruby
class BillingTest < ActiveSupport::TestCase
  test "charges the user" do
    StripeClient.stubs(:charge).returns(true)
    assert Billing.charge_user(42)
    # exits without unstub; StripeClient.charge stays mocked
  end

  test "falls back when stripe is down" do
    # StripeClient.charge is still the stub returning true
    assert Billing.fallback_for(42)
  end
end
```

Fix. Require mocha/minitest in your test helper so stubs auto-revert. Prefer expects over stubs when you actually want to verify the call: failed expectations fail the test loudly.

```ruby
# test/test_helper.rb
require "mocha/minitest"

# in the test
test "charges the user" do
  StripeClient.expects(:charge).with(42).returns(true)
  assert Billing.charge_user(42)
  # mocha verifies and unstubs at end of test
end
```

With Mergify. Test Insights catches the cross-test signature: a test only fails when run after a specific other test, and only when its assertion involves a stubbed method. The dashboard tags the dependency so you know it's mock leakage, not a real regression.

Pattern 7

setup/teardown skipped on early errors

Symptom. A test class that opens a database connection in `setup` leaves orphan connections after a `setup` block raises, and the next CI run starts with a connection-pool warning.

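Root cause. When setup raises, the rest of setup is skipped and the test body never runs. Minitest still calls teardown afterwards, just as it does when the test body fails, but teardown now sees half-initialized state: instance variables assigned after the failing line are nil. A teardown written on the assumption that setup finished raises on the first nil and stops before it releases anything, so external resources opened in setup before the failing line stay open. Minitest::Test#after_teardown has the same constraint.

```ruby
class APIClientTest < ActiveSupport::TestCase
  setup do
    @conn = open_connection_pool
    @client = APIClient.new(maybe_nil: nil) # raises NoMethodError
  end

  teardown do
    @client.shutdown # hypothetical cleanup call; @client is nil, so this raises
    @conn.close      # never runs; @conn leaks
  end
end
```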

Fix. Wrap risky construction in Ruby's begin/rescue, close the connection, and re-raise, so the resource is released even when setup raises. Guard teardown against nil and already-closed resources so it is safe to run after a partial setup. The classic ActiveSupport around-style helper does the same thing implicitly with yield.

```ruby
class APIClientTest < ActiveSupport::TestCase
  setup do
    @conn = open_connection_pool
    begin
      @client = APIClient.new(maybe_nil: nil)
    rescue
      @conn.close # ensure the resource is freed even when init raises
      raise
    end
  end

  teardown do
    @conn.close if @conn && !@conn.closed?
  end
end
```
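The `if @conn && !@conn.closed?` guard in the teardown above generalizes to several resources. The sketch below is one possible shape, with release_all and BrokenResource as hypothetical names: it skips anything setup never assigned, skips anything already closed, and keeps going when one close fails so the remaining resources are still released.

```ruby
require "stringio"

# Closes every resource it is given, skipping nils (setup never assigned them)
# and resources that are already closed. Errors raised by one close are
# collected and returned so that the remaining resources are still released.
def release_all(*resources)
  errors = []
  resources.compact.each do |resource|
    begin
      next if resource.respond_to?(:closed?) && resource.closed?
      resource.close
    rescue StandardError => e
      errors << e
    end
  end
  errors
end

# Hypothetical resource whose close always fails.
class BrokenResource
  def close
    raise IOError, "close failed"
  end
end

open_io   = StringIO.new("data")
closed_io = StringIO.new("data").tap(&:close)

errors = release_all(BrokenResource.new, nil, closed_io, open_io)
puts errors.map(&:class).inspect
puts open_io.closed?
```

A teardown could call `release_all(@client, @conn)` and then report any returned errors, so a failure in one cleanup step never hides a leak in the next.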

With Mergify. Test Insights groups failures whose only signature is connection-pool exhaustion or 'too many open files' into an fd-leak bucket and surfaces the test that first triggered the leak, rather than the random victim that happened to be running when the process ran out of descriptors.

Pattern 8

Shell-level minitest reruns hiding real bugs

Symptom. Your CI runs `bundle exec rails test || bundle exec rails test` and the build is green. A user hits a bug your tests should have caught.

Root cause. Minitest does not ship with a retry mechanism, so teams reach for shell-level retries: rerun the failing test with the same seed, or rerun the whole suite if anything failed. A real race that loses on attempt 1 and wins on attempt 2 gets reported as green. The bug is still there. The pipeline has decided not to look at it.

```shell
# CI script (please don't)
bundle exec rails test || bundle exec rails test || exit 1
```

Fix. Do not retry at the shell level. When a test is genuinely flaky, fix it. When the fix takes longer than a session, quarantine it instead. That keeps the signal visible without blocking the merge queue.

With Mergify. Test Insights reruns at the CI level with attempt-level result tracking. You see that a test passed on attempt 2 of 3, which is exactly the information shell-level retries throw away. Quarantine kicks in once the pattern is clear.
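To make the difference concrete, the sketch below shows what attempt-level tracking keeps that `||` throws away. It illustrates the idea only and is not Mergify's implementation; run_with_attempts and classify are hypothetical names.

```ruby
# Runs the block up to +max_attempts+ times, stopping at the first pass,
# and keeps the outcome of every attempt instead of only the last one.
def run_with_attempts(max_attempts)
  outcomes = []
  max_attempts.times do |index|
    passed = yield(index + 1) ? true : false
    outcomes << passed
    break if passed
  end
  outcomes
end

# Classifies the recorded outcomes: a pass that follows a failure is flaky, not green.
def classify(outcomes)
  return :passed if outcomes == [true]
  return :flaky if outcomes.last == true
  :failed
end

# A simulated race that loses on attempt 1 and wins on attempt 2.
racy = run_with_attempts(3) { |attempt| attempt >= 2 }
p racy
p classify(racy)
```

A shell-level `||` would report this run as a plain success. Keeping the list of outcomes is what lets the flaky test be flagged and quarantined instead of silently passing.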