Pattern 1

t.Parallel() racing on shared package state

Symptom. A test that has been green for months starts failing intermittently after another test in the same package adopts t.Parallel(), and the failure mentions a value the failing test never set.

Root cause. Calling t.Parallel() pauses the test and later resumes it concurrently with every other parallel test in the same package. The number running at once is capped by -parallel, which defaults to GOMAXPROCS. Anything these tests share becomes a race: a package-level var, a singleton client, a shared temp file path, an environment variable read at init. Tests that worked sequentially start failing under parallelism even though their own code did not change.

var lastUserID string // package-level state

func TestCreateUser(t *testing.T) {
	t.Parallel()
	lastUserID = createUser(t).ID
	if lastUserID == "" {
		t.Fatal("expected a user ID")
	}
}

func TestNotifyLastUser(t *testing.T) {
	t.Parallel()
	// races with TestCreateUser; sometimes "" sometimes a real ID
	notify(lastUserID)
}

Fix. Treat package-level mutable state as a smell in tested packages. Pass values through local variables, or through helpers that take *testing.T, so that each parallel test owns what it touches. For shared external state you cannot avoid (a database, a temp directory), give each parallel test its own slice of it: a directory from t.TempDir(), rows scoped by t.Name(), or a transactional fixture.

func TestCreateUser(t *testing.T) {
	t.Parallel()
	id := createUser(t).ID
	if id == "" {
		t.Fatal("expected a user ID")
	}
}

func TestNotifyUser(t *testing.T) {
	t.Parallel()
	id := createUser(t).ID
	notify(id)
}

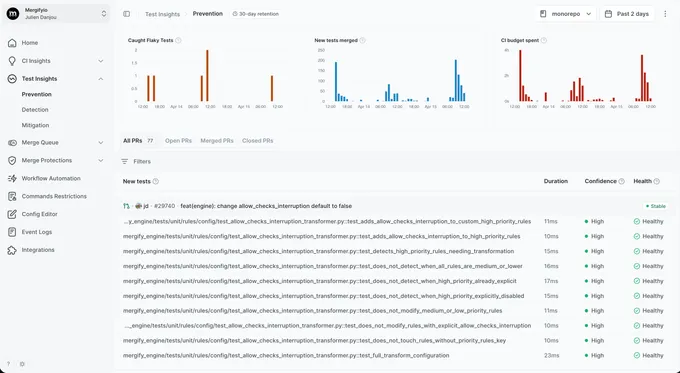

With Mergify. Test Insights catches the parallel-only signature: a test only fails when run with -parallel > 1 and passes under -parallel=1 reruns. The dashboard tags it as parallelism-sensitive so the shared-state root cause is the obvious lead.

Pattern 2

Goroutine leaks across test boundaries

Symptom. A test passes, the next test fails with `connection refused` or a panic from a goroutine that the failing test never started.

Root cause. Tests start goroutines (a worker, a server, a poller) and finish before those goroutines do. The runtime keeps them running into the next test, where they continue to push to a channel, write to a file, or hit a network endpoint that the new test owns. The new test sees behavior it cannot explain.

func TestPollMetrics(t *testing.T) {
	go func() {
		for {
			time.Sleep(10 * time.Millisecond)
			metrics.Push() // still running after t returns
		}
	}()
	// no cleanup
}

func TestMetricsAreEmpty(t *testing.T) {
	if got := metrics.Len(); got != 0 {
		t.Fatalf("expected empty, got %d", got) // sometimes fails
	}
}

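Fix. Give every goroutine a test starts a way to stop, and wait for it to stop. Derive a context with context.WithCancel, cancel it from t.Cleanup, and block in that cleanup until the goroutine has returned. On Go 1.24+, t.Context() returns a context that is cancelled just before cleanup functions run, which saves the manual cancel. Add go.uber.org/goleak to TestMain (goleak.VerifyTestMain(m)) so the suite fails loudly when a leak ships.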

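func TestPollMetrics(t *testing.T) {
	ctx, cancel := context.WithCancel(context.Background())
	done := make(chan struct{})
	t.Cleanup(func() {
		cancel() // ask the poller to stop...
		<-done   // ...and wait until it has actually returned
	})
	go func() {
		defer close(done)
		ticker := time.NewTicker(10 * time.Millisecond)
		defer ticker.Stop()
		for {
			select {
			case <-ctx.Done():
				return
			case <-ticker.C:
				metrics.Push()
			}
		}
	}()
}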

With Mergify. Test Insights surfaces the cross-test signature: test B fails consistently when run after test A and never alone. The dashboard groups failures by their predecessor so the leaking-goroutine pattern is easy to find.

Pattern 3

Map iteration order assumptions

Symptom. A test passes hundreds of times locally and fails once a week on CI with an assertion against the first element of a slice built from a map.

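Root cause. Go intentionally randomizes map iteration order to discourage code that depends on it. A test that iterates a map and asserts on the first value implicitly assumes an order Go does not promise. The starting point is re-randomized on every range loop, not once per process. However, the randomization picks a different starting point rather than performing a full shuffle, so some orderings show up far more often than others. That is how an order dependency can survive many green runs before a run lands on an unlucky ordering.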

func TestNamesContainsAlice(t *testing.T) {
	users := map[string]int{"alice": 1, "bob": 2, "carol": 3}
	first := ""
	for name := range users {
		first = name
		break
	}
	if first != "alice" {
		t.Fatalf("expected alice, got %q", first) // fails whenever another key happens to come first
	}
}

Fix. Sort before asserting. Build a slice from the map's keys (or values), sort it (with sort.Strings, or with slices.Sorted(maps.Keys(m)) on Go 1.23+), then compare. For "the map contains X" tests, prefer an explicit lookup over iterating.

func TestNamesContainsAlice(t *testing.T) {
	users := map[string]int{"alice": 1, "bob": 2, "carol": 3}
	if _, ok := users["alice"]; !ok {
		t.Fatal("expected alice in users")
	}
}

// or, when order matters, sort (slices.Sorted and maps.Keys need Go 1.23+):

func TestNamesSorted(t *testing.T) {
	users := map[string]int{"alice": 1, "bob": 2, "carol": 3}
	names := slices.Sorted(maps.Keys(users))
	if !slices.Equal(names, []string{"alice", "bob", "carol"}) {
		t.Fatalf("got %v", names)
	}
}

With Mergify. Test Insights tracks pass rates per test on the default branch. A test that fails once every few hundred runs with a string-comparison error on a value taken from map iteration is the textbook signature; the dashboard surfaces it under map-iteration confidence drops.

Pattern 4

Time-based assertions without testing/synctest

Symptom. A test that asserts something happens "after 100ms" passes locally and fails on the slow CI runner with a tick that arrived a millisecond late.

Root cause. time.Sleep and time.NewTimer use the real clock. A test that sleeps 250ms and then asserts that a 100ms refresher has fired at least twice is racing the scheduler. On an idle laptop, both ticks land near 100ms and 200ms, well inside the sleep. On a throttled CI runner, scheduling delays can push the second tick past the moment the test wakes up and checks the count.

func TestRefreshFiresEvery100ms(t *testing.T) {
	count := 0
	stop := startRefresher(func() { count++ }, 100*time.Millisecond)
	defer stop()
	time.Sleep(250 * time.Millisecond)
	if count < 2 {
		t.Fatalf("expected at least 2 ticks, got %d", count) // sometimes 1 on CI
	}
}

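Fix. Use testing/synctest to virtualize time inside the test. It is generally available from Go 1.25; Go 1.24 shipped it as an experiment behind GOEXPERIMENT=synctest, with a synctest.Run entry point. Inside the bubble, the clock advances only when every goroutine in it is durably blocked, so a 250ms sleep returns instantly and at an exact virtual time. For older Go, inject a Clock interface and substitute a fake.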

func TestRefreshFiresEvery100ms(t *testing.T) {
	synctest.Test(t, func(t *testing.T) { // Go 1.25+ API
		var count atomic.Int32 // written by the refresher goroutine, read by the test
		stop := startRefresher(func() { count.Add(1) }, 100*time.Millisecond)
		defer stop()
		time.Sleep(250 * time.Millisecond) // virtual time, deterministic
		if got := count.Load(); got != 2 {
			t.Fatalf("expected exactly 2 ticks, got %d", got)
		}
	})
}

With Mergify. Test Insights links the failure to its CI runner type. When a timing test only fails on the slower runner pool and never on the laptop pool, the dashboard surfaces the resource sensitivity so the real-clock dependency is the obvious culprit.

Pattern 5

httptest.Server connection leaks

Symptom. A long-running test suite eventually fails with `dial tcp: too many open files` or a flaky test panics with `address already in use`.

Root cause. httptest.NewServer binds a port and starts a goroutine. Forgetting to call srv.Close() leaks both. After enough tests, the worker hits the file-descriptor limit. The first test to run after that point fails with an unrelated socket error.

func TestUploadHandler(t *testing.T) {
	srv := httptest.NewServer(uploadHandler())
	// no defer srv.Close()
	resp, _ := http.Post(srv.URL, "application/octet-stream", strings.NewReader("hi"))
	if resp.StatusCode != 200 {
		t.Fatalf("got %d", resp.StatusCode)
	}
}

Fix. Always defer srv.Close(), or register it with t.Cleanup so the cleanup survives early returns. Pair with resp.Body.Close() for every HTTP response to release the underlying connection.

func TestUploadHandler(t *testing.T) {
	srv := httptest.NewServer(uploadHandler())
	t.Cleanup(srv.Close)
	resp, err := http.Post(srv.URL, "application/octet-stream", strings.NewReader("hi"))
	if err != nil {
		t.Fatal(err)
	}
	defer resp.Body.Close()
	if resp.StatusCode != 200 {
		t.Fatalf("got %d", resp.StatusCode)
	}
}

With Mergify. Test Insights groups failures whose only signature is `too many open files` or `address already in use` into a single fd-exhaustion bucket. The dashboard surfaces the test that first triggered the leak rather than the random victim that ran when fds ran out.

Pattern 6

TestMain teardown bypassed by os.Exit

Symptom. A test suite that uses TestMain to spin up a database container leaves the container running after a failure, and the next CI run fails with `port already allocated`.

Root cause. TestMain patterns that defer teardown and then call os.Exit(code) never run the deferred function: os.Exit terminates the process without unwinding the stack. The same trap shows up for code under test that calls log.Fatal or os.Exit directly, or for a goroutine that panics outside any test function and crashes the whole process before m.Run() returns.

func TestMain(m *testing.M) {
	pool := startTestDB()
	defer pool.Close() // SKIPPED: os.Exit never runs deferred funcs
	os.Exit(m.Run())
}

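Fix. Keep os.Exit out of the function that owns the teardown. Do setup and deferred teardown in a helper that returns m.Run()'s exit code, and pass that code to os.Exit only after the helper has returned. Since Go 1.15, TestMain can also simply call m.Run() and return: the testing package then exits with m.Run()'s result, and your deferred calls run normally. Inside test bodies, replace log.Fatal with t.Fatal so the runner can clean up. For goroutines started in tests, recover and report through t.Errorf instead of letting the panic crash the process.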

func TestMain(m *testing.M) {
	os.Exit(run(m))
}

func run(m *testing.M) int {
	pool := startTestDB()
	defer pool.Close() // runs before os.Exit
	return m.Run()
}

With Mergify. Test Insights notices that the first test of a CI run fails for the same `port already allocated` reason and the failure correlates with a panic on the previous run. The dashboard surfaces the cross-run signature so the missing teardown is easy to spot.

Pattern 7

Subtest plus t.Parallel interleaving surprises

Symptom. A table-driven test with `t.Parallel()` inside the subtest passes locally and fails on CI with subtests sharing the same loop-variable state.

Root cause. t.Run with t.Parallel() inside delays the subtest until the parent finishes its body, then runs all parallel subtests together. Pre-Go 1.22 this combined with loop variable capture to give every subtest the same value of the loop variable. Go 1.22+ fixed the loop-var semantics but the parent-finishes-first ordering still surprises people who assume subtests run in declaration order.

func TestPricing(t *testing.T) {
	cases := []struct{ plan string; want int }{
		{"free", 0}, {"pro", 99}, {"enterprise", 999},
	}
	for _, tc := range cases {
		t.Run(tc.plan, func(t *testing.T) {
			t.Parallel()
			got := priceFor(tc.plan)
			if got != tc.want {
				// pre-Go 1.22: tc was the last loop value for every subtest
				t.Fatalf("plan %q: want %d, got %d", tc.plan, tc.want, got)
			}
		})
	}
}

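Fix. Stay on Go 1.22 or newer, and make sure go.mod declares go 1.22 or later: per-iteration loop variables are keyed to the module's declared Go version, not just to the toolchain you build with. If you cannot upgrade, capture the loop variable explicitly (tc := tc) before calling t.Run. For setup shared across subtests, run it in the parent and pass it to the subtests through the closure. Release it with t.Cleanup rather than defer, because a deferred call in the parent runs as soon as the parent's body returns, before any parallel subtest has resumed.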

func TestPricing(t *testing.T) {
	cases := []struct{ plan string; want int }{
		{"free", 0}, {"pro", 99}, {"enterprise", 999},
	}
	for _, tc := range cases {
		// Go 1.22+ (go.mod go directive >= 1.22): tc is per-iteration, no manual shadow needed
		t.Run(tc.plan, func(t *testing.T) {
			t.Parallel()
			if got := priceFor(tc.plan); got != tc.want {
				t.Fatalf("plan %q: want %d, got %d", tc.plan, tc.want, got)
			}
		})
	}
}

With Mergify. Test Insights groups failing subtests by their parent. When every subtest of TestPricing fails with the same wrong value, the dashboard surfaces the loop-variable signature so the upgrade or shadow fix lands once.

Pattern 8

CI retries hiding flaky Go test failures

Symptom. Your first CI run fails, a retry passes, and the pipeline reports green. A user still hits the race in production.

Root cause. go test -count=N reruns tests N times and exits non-zero if any run fails, so it tends to expose flakes rather than mask them. The masking happens when CI or shell scripts retry the whole suite and only keep the final result. That turns "failed once, passed later" into "green" even though the underlying race or order dependency is still there. Cached results are a separate concern; use -count=1 when you need a fresh run.

# CI script (please don't)

go test ./... || go test ./... || go test ./...

Fix. Do not hide failures behind blind suite retries. Let the first failing attempt stay visible, then fix the flaky test or quarantine it explicitly while you work on the root cause. If you need a fresh run instead of cached results, use go test -count=1 without treating later retries as success.

With Mergify. Test Insights reruns at the CI level with attempt-by-attempt result tracking. You can see that a test failed on attempt 1 and passed on attempt 2, which is exactly the signal that naive shell retries would hide. Quarantine kicks in once the pattern is clear.