Pattern 1

Symptom. A test passes alone and fails in the suite, with the failure pointing at a fixture that was supposed to be torn down already.

Root cause. Yield-style fixtures tear down in reverse order of setup, and module/session-scoped fixtures stay alive across many tests. If two fixtures touch the same external resource (a database, a tmp directory, a singleton), the teardown of one can pull state out from under tests still using the other. The next test inherits a half-cleaned world.

    import pytest

    @pytest.fixture(scope="module")
    def db_conn():
        conn = connect_to_db()
        yield conn
        conn.close()  # Closes here

    @pytest.fixture
    def user(db_conn):
        u = db_conn.create_user()
        yield u
        db_conn.delete_user(u.id)  # Runs AFTER db_conn.close in some orderings

Fix. Make the cleanup defensive: have inner fixtures check that outer resources are still live, or scope all related fixtures to the same lifecycle. For shared external state, prefer pytest-postgresql-style transactional fixtures that roll back rather than delete.

    @pytest.fixture
    def user(db_conn):
        if db_conn.closed:
            pytest.skip("db_conn already torn down")
        u = db_conn.create_user()
        yield u
        if not db_conn.closed:
            db_conn.delete_user(u.id)
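The rollback approach mentioned in the fix can be sketched with the standard library's sqlite3 module standing in for a real database. The `users` table, the `rolled_back` helper, and the in-memory connection are illustrative assumptions, not part of pytest-postgresql. The point is only that cleanup discards the transaction instead of issuing deletes against a connection that may already be gone.

```python
import sqlite3
from contextlib import contextmanager


def make_connection():
    """Open an in-memory database with a hypothetical `users` table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.commit()
    return conn


@contextmanager
def rolled_back(conn):
    """Yield the connection, then discard every uncommitted change."""
    try:
        yield conn
    finally:
        conn.rollback()


def count_users(conn):
    return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]


conn = make_connection()
with rolled_back(conn) as c:
    c.execute("INSERT INTO users (name) VALUES ('alice')")
    rows_during_test = count_users(c)  # the test sees its own row
rows_after_test = count_users(conn)    # the row is gone after rollback
```

In a suite, the body of `rolled_back` would likely live in a function-scoped yield fixture that depends on the longer-lived connection fixture, so no test has to remember which rows it created.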

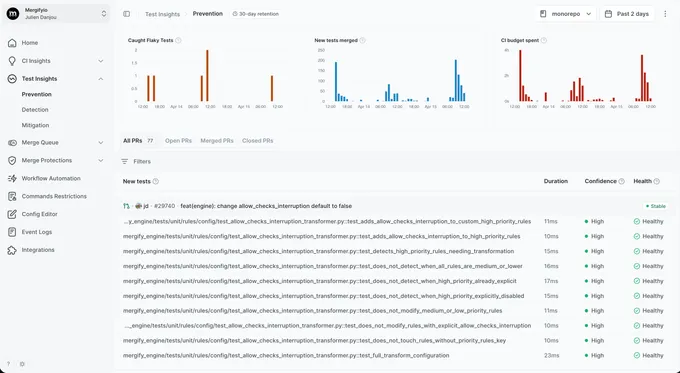

With Mergify. Test Insights reruns the suspect test in isolation. When the same SHA passes alone but fails in the suite, the test gets flagged as ordering-sensitive and quarantined while you fix the fixture lifecycle.

Pattern 2

Order-dependent specs under pytest-xdist

Symptom. Tests pass on a single worker, fail with `pytest -n auto`, and the failure shifts to a different test on each rerun.

Root cause. pytest-xdist distributes tests across worker processes. Anything those workers share (a tmp directory at a fixed path, a global counter in a module, a Redis key, an env var) becomes a race. Worse, the distribution algorithm depends on test count and worker count, so the failure looks non-deterministic when it is actually a clean function of which tests landed on which worker.

    import os

    # tests use a shared tmp directory
    WORKDIR = "/tmp/test-output"

    def test_writes_report():
        with open(f"{WORKDIR}/report.txt", "w") as f:
            f.write("hello")

    def test_reads_report():
        # Under -n auto, this can run before test_writes_report on a sibling worker
        assert os.path.exists(f"{WORKDIR}/report.txt")

Fix. Use the tmp_path fixture (per-test directory) or the tmp_path_factory fixture (per-session). For inter-worker shared state, use the worker_id fixture to namespace by worker.

    def test_writes_report(tmp_path):
        (tmp_path / "report.txt").write_text("hello")

    def test_reads_report(tmp_path):
        (tmp_path / "report.txt").write_text("hello")
        assert (tmp_path / "report.txt").exists()
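For state that has to live outside tmp_path, namespacing by worker might look like the sketch below. It reads the `PYTEST_XDIST_WORKER` environment variable that pytest-xdist sets in worker processes, which carries the same value the worker_id fixture returns. The `worker_scoped_dir` helper and the `test-output-` prefix are hypothetical names chosen for illustration.

```python
import os
import tempfile
from pathlib import Path
from typing import Optional


def worker_scoped_dir(base: Path, worker_id: Optional[str] = None) -> Path:
    """Return a directory under `base` that is unique to one xdist worker."""
    if worker_id is None:
        # pytest-xdist sets PYTEST_XDIST_WORKER (e.g. "gw0") in worker processes;
        # fall back to "master" for a non-distributed run.
        worker_id = os.environ.get("PYTEST_XDIST_WORKER", "master")
    path = base / f"test-output-{worker_id}"
    path.mkdir(parents=True, exist_ok=True)
    return path


with tempfile.TemporaryDirectory() as root:
    dir_gw0 = worker_scoped_dir(Path(root), "gw0")
    dir_gw1 = worker_scoped_dir(Path(root), "gw1")
    (dir_gw0 / "report.txt").write_text("hello")
    gw0_sees_report = (dir_gw0 / "report.txt").exists()
    gw1_sees_report = (dir_gw1 / "report.txt").exists()
```

Each worker writes into its own directory, so a file written by one worker can never satisfy or break an assertion on another.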

With Mergify. Test Insights groups failures by their xdist worker id. When a test only fails on one worker or only at certain test counts, the dashboard surfaces the parallelism dimension so the ordering dependency is obvious.

Pattern 3

Hypothesis seed non-determinism

Symptom. A property test passes on every commit for weeks, then fails once on CI with an example you cannot reproduce locally.

Root cause. Hypothesis generates a different example set each run unless you pin a seed. Most runs hit the same easy examples; occasionally Hypothesis explores deeper and finds a real edge case your code never handled. The test is not flaky; your code is. Without the seed, though, the failure looks intermittent.

    from hypothesis import given, strategies as st

    @given(st.integers())
    def test_round_trip(n):
        assert from_str(to_str(n)) == n

    # Passes 99 of 100 runs. The 100th finds n=-2**63 and fails.

Fix. Add the failing example to the test (Hypothesis prints the reproducer in the failure output) and pin the seed in CI when you need bisection, for example with the `--hypothesis-seed` option or the `@seed` decorator. Treat any new Hypothesis failure as a real bug, not a flake.

    from hypothesis import example, given, strategies as st

    @given(st.integers())
    @example(-(2**63))  # the case Hypothesis found
    def test_round_trip(n):
        assert from_str(to_str(n)) == n

With Mergify. Test Insights treats Hypothesis failures as real bugs by default (one failure on the default branch is enough to trigger). Quarantine is reserved for tests where the cost of fixing exceeds the cost of the noise, and the dashboard surfaces the failed example for triage.

Pattern 4

Autouse fixture surprises

Symptom. A test that does not request any fixture suddenly fails after an unrelated PR adds a new fixture to conftest.py.

Root cause. Autouse fixtures run for every test in their scope without being requested. That is the whole point, but it means a fixture added in a parent conftest.py runs across the entire subtree below it. If the autouse fixture mutates global state (a config dict, a feature flag, an env var) and assumes it owns the world, tests that never asked for it can now see modified state.

    # conftest.py at the repo root
    import pytest

    @pytest.fixture(autouse=True)
    def reset_feature_flags():
        flags["NEW_BILLING"] = True
        yield
        flags["NEW_BILLING"] = False

    # tests/test_legacy.py: never requested the fixture
    def test_legacy_billing_path():
        # Used to assert flags["NEW_BILLING"] is False. Now it's True while the test runs.
        assert legacy_charge() == 100

Fix. Constrain the autouse fixture's scope by moving it to a more specific conftest.py, or convert it to a non-autouse fixture and request it explicitly where needed. `pytest --fixtures-per-test` lists the fixtures each test actually uses, autouse ones included, and `pytest --setup-show` shows them being set up and torn down during a run.
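One way to shrink the blast radius further is an opt-in helper that restores whatever value was there before, rather than hard-coding False on teardown. The sketch below uses `unittest.mock.patch.dict` from the standard library; the `flags` dictionary and the `new_billing_enabled` name are assumptions made for illustration.

```python
from contextlib import contextmanager
from unittest.mock import patch

# Hypothetical stand-in for the application's feature-flag dictionary.
flags = {"NEW_BILLING": False}


@contextmanager
def new_billing_enabled():
    """Turn NEW_BILLING on, then restore whatever the dict held before."""
    with patch.dict(flags, {"NEW_BILLING": True}):
        yield flags


before = flags["NEW_BILLING"]
with new_billing_enabled():
    during = flags["NEW_BILLING"]
after = flags["NEW_BILLING"]
```

Placed under a plain `@pytest.fixture` (no `autouse=True`), the same body would flip the flag only for tests that name the fixture, and legacy tests would keep seeing the original value.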

With Mergify. Test Insights notices that the failure's appearance correlates with a specific commit. The dashboard links the failing test to the conftest.py change, so the autouse-fixture blast radius is visible at PR time.

Pattern 5

Symptom. A test passes in isolation, fails when run after a specific other test, and the failure mentions a function returning a value it should not.

Root cause. monkeypatch reverts at the end of the test that requested it, and unittest.mock.patch used as a context manager or decorator reverts when the block or function exits. The leak comes from the manual form: call start() on a patcher and never reach stop(), because it was forgotten or an exception skipped it, and the patch stays installed for every later test in the same process.

    import unittest.mock

    def test_charge_uses_stripe():
        p = unittest.mock.patch("billing.stripe_client.charge", return_value=True)
        p.start()  # forgot to p.stop(); patch leaks into next test
        assert charge_user(42) is True

    def test_charge_falls_back():
        # billing.stripe_client.charge is still mocked from the previous test
        assert charge_user(42) is True

Fix. Prefer pytest's monkeypatch fixture (auto-reverts) for env vars, attribute swaps, and module-level patches. For unittest.mock.patch, always use it as a context manager or decorator so the patch reverts on test exit.

    def test_charge_uses_stripe(monkeypatch):
        monkeypatch.setattr("billing.stripe_client.charge", lambda _: True)
        assert charge_user(42) is True
        # Reverts automatically at test end
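When unittest.mock.patch is the better tool, the context-manager form gives the same guarantee, even if the test body raises. A small sketch, with a `SimpleNamespace` as a hypothetical stand-in for `billing.stripe_client` and a simplified `charge_user`:

```python
from types import SimpleNamespace
from unittest.mock import patch

# Hypothetical stand-in for billing.stripe_client.
stripe_client = SimpleNamespace(charge=lambda user_id: False)


def charge_user(user_id):
    return stripe_client.charge(user_id)


def failing_test_body():
    """Patch inside a with-block, then fail the way a broken test would."""
    with patch.object(stripe_client, "charge", return_value=True):
        assert charge_user(42) is True
        raise RuntimeError("test blew up mid-patch")


try:
    failing_test_body()
except RuntimeError:
    pass

# The with-block has exited, so the real function is back in place.
result_after_failure = charge_user(42)
```

The exception propagates out of the block, but the original `charge` is restored on the way, so the next test starts from an unpatched client.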

With Mergify. Test Insights catches the cross-test signature: failure only happens when the suspect test runs after a specific other test. The dashboard tags it as ordering-dependent so you know the failure is leakage, not a real regression.

Pattern 6

Async event-loop scope mismatch

Symptom. An async test fails with `RuntimeError: Event loop is closed` or `attached to a different loop` after upgrading pytest-asyncio.

Root cause. pytest-asyncio creates a new event loop per test by default in modern versions. Fixtures that hold connections (HTTP clients, database pools, websockets) bind to the loop they were created on. If a session-scoped fixture creates a connection on one loop and a test runs on a different loop, the connection is now attached to a closed loop. The first test passes; the next blows up.

    import httpx
    import pytest

    @pytest.fixture(scope="session")
    async def http_client():
        async with httpx.AsyncClient() as c:
            yield c  # bound to whatever loop ran first

    @pytest.mark.asyncio
    async def test_one(http_client):
        await http_client.get("/")  # passes

    @pytest.mark.asyncio
    async def test_two(http_client):
        await http_client.get("/")  # RuntimeError: attached to a different loop
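The loop binding itself can be reproduced in plain asyncio, without pytest or httpx. In this sketch a bare future plays the role of the session-scoped connection; `wait_on` and `reproduce_loop_mismatch` are hypothetical names, and a real client fails further down its own stack rather than at this exact line.

```python
import asyncio


async def wait_on(resource):
    """Await an object that was created on some other event loop."""
    await resource


def reproduce_loop_mismatch():
    # Loop A plays the role of the loop that created the session fixture.
    loop_a = asyncio.new_event_loop()
    resource = loop_a.create_future()  # bound to loop_a for its whole life
    loop_a.close()

    # asyncio.run() starts a fresh loop, like the next test does.
    try:
        asyncio.run(wait_on(resource))
    except RuntimeError as exc:
        return str(exc)
    return None


message = reproduce_loop_mismatch()
```

The returned message contains the same "attached to a different loop" wording as the symptom, which is why the failure shows up only once a second loop enters the picture.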

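Fix. Match fixture scope to event-loop scope. Either set asyncio_default_fixture_loop_scope = session in pytest.ini and accept the broader sharing, or scope the fixture to "function" so each test gets a fresh client on its own loop. With the session option, the tests that use the fixture have to run on the session loop as well; recent pytest-asyncio versions express this as @pytest.mark.asyncio(loop_scope="session").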

    # pytest.ini
    [pytest]
    asyncio_mode = auto
    asyncio_default_fixture_loop_scope = session

    # conftest.py
    @pytest.fixture(scope="session")
    async def http_client():
        async with httpx.AsyncClient() as c:
            yield c

With Mergify. Test Insights reports the failure with its full async stack trace. When the loop-mismatch error appears in the dashboard alongside a recent dependency bump, the connection between the upgrade and the failure is hard to miss.

Pattern 7

Symptom. Test collection itself fails or hangs under `pytest -n auto`, with errors that mention database connections, missing env vars, or file locks.

Root cause. Pytest collects tests by importing every test module. If a module opens a database connection at import time, opens a file, or fires off a background thread, that side effect runs once per worker on every test session. Two workers race to acquire the same lock; an env var that exists locally is missing in the CI image; an HTTP call at import time times out.

    # tests/test_pricing.py
    from app.pricing import db  # import-time DB connection

    DB_USER = db.query("SELECT current_user").scalar()  # runs at import

    def test_pricing_lookup():
        assert pricing_for("pro") == 99

Fix. Push side effects into fixtures, where they only run for tests that request them. The module body should be free of I/O and network calls.

    # tests/test_pricing.py
    import pytest

    @pytest.fixture
    def db_user(db_conn):
        return db_conn.query("SELECT current_user").scalar()

    def test_pricing_lookup(db_user):
        assert pricing_for("pro") == 99
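The same rule applies to application modules that tests import: if the module opens its connection in the module body, every worker pays for it at collection time. A lazy accessor is one possible shape for deferring that work. In this sketch sqlite3 stands in for the real driver, and `get_db` and `db_answer` are hypothetical names.

```python
import sqlite3
from functools import lru_cache


@lru_cache(maxsize=None)
def get_db():
    """Open the connection on first use instead of at import time."""
    # sqlite3 in memory is a stand-in for the real database driver.
    return sqlite3.connect(":memory:")


def db_answer():
    return get_db().execute("SELECT 1").fetchone()[0]


# Defining the functions opened nothing; the cache is still empty.
connections_after_import = get_db.cache_info().currsize
answer = db_answer()
connections_after_first_use = get_db.cache_info().currsize
same_connection_reused = get_db() is get_db()
```

Importing the module stays free of I/O, and the connection is created once, the first time some test actually needs it.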

With Mergify. Collection-time failures show up in Test Insights as a session-level error rather than a per-test flake. The dashboard groups these distinctly so they do not pollute per-test confidence scores.

Pattern 8

pytest-rerunfailures hiding real bugs

Symptom. Your CI pipeline is green. A user reports a bug that your tests should have caught.

Root cause. pytest-rerunfailures with --reruns 3 retries failing tests up to three times and reports the last result. A real bug that fails on attempt 1 because it lost a race it usually wins gets reported as green as soon as a rerun happens to win that race. The bug is still there. Your suite has decided not to look at it.

    # pytest.ini (please don't)
    [pytest]
    addopts = --reruns 3 --reruns-delay 1
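To make concrete what "reports the last result" throws away, here is a minimal sketch of a retry loop that keeps every attempt's outcome. It is not how pytest-rerunfailures or Test Insights is implemented; `run_with_attempts` and `loses_race_once` are hypothetical, and the attempt limit follows the sketch's own semantics rather than the plugin's.

```python
from typing import Callable, List


def run_with_attempts(test_fn: Callable[[], None], max_attempts: int = 3) -> List[str]:
    """Run test_fn until it passes or attempts run out; keep every outcome."""
    outcomes = []
    for _ in range(max_attempts):
        try:
            test_fn()
        except AssertionError:
            outcomes.append("failed")
        else:
            outcomes.append("passed")
            break
    return outcomes


calls = {"count": 0}


def loses_race_once():
    """Hypothetical test that fails on its first call only."""
    calls["count"] += 1
    assert calls["count"] > 1


outcomes = run_with_attempts(loses_race_once)
final_result = outcomes[-1]  # all that a last-result report would show
was_flaky = "failed" in outcomes and final_result == "passed"
```

The final result alone says "passed"; only the full list of outcomes reveals that the first attempt failed.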

Fix. Do not retry at the pytest level. When a test is genuinely flaky, fix it. When the fix takes longer than a session, quarantine it instead, which keeps the signal visible without blocking merges.

With Mergify. Test Insights reruns at the CI level with attempt-level result tracking. You can see that a test passed on attempt 2 of 3, which is exactly the information rerunfailures discards. Quarantine kicks in once the pattern is clear, not silently after every flake.