Pattern 1

Symptom. A test passes in isolation, fails when the suite runs end to end, and the failure looks like a completely unrelated assertion timeout.

Root cause. Calling jest.useFakeTimers() mutates a module-scoped timer mock that survives the test unless you explicitly restore it. setTimeout/setInterval callbacks queued under fake timers can fire under the next test's real timers, and vice versa. The next test sees phantom assertion runs, mock calls it did not make, or promise states that make no sense.

test("fake-timer leakage", () => {

jest.useFakeTimers();

setTimeout(() => fetchUser(), 5000);

// no cleanup. fake timers leak into the next test

});

test("getUser returns the logged-in user", async () => {

const user = await getUser();

expect(user).toBeDefined(); // fetchUser from test 1 fires here

});

Fix. Pair every useFakeTimers() with useRealTimers() in afterEach, and clear pending timers before the switch. If you use fake timers everywhere, move it into a global setup and stop flipping per-test.

afterEach(() => {

jest.clearAllTimers();

jest.useRealTimers();

});

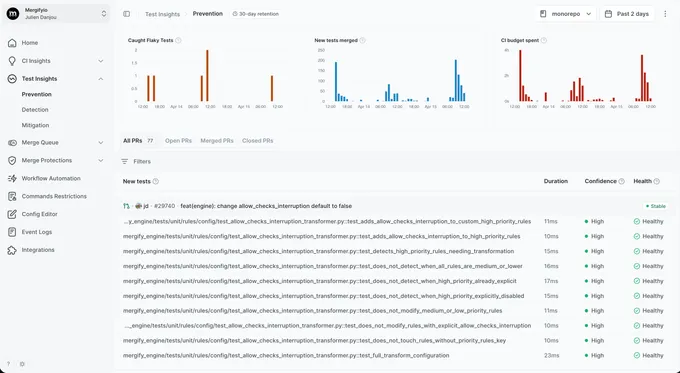

With Mergify. Test Insights reruns the suspect test on a dedicated worker. When the same SHA produces different results across runs, the test is flagged; when two of the three runs agree, the dissenter is quarantined so the merge queue keeps moving.

Pattern 2

Snapshot drift on shared state

Symptom. A snapshot test fails every few days with a diff you did not cause: a timestamp shifted, a UUID changed, a list reordered.

Root cause. toMatchSnapshot() serializes whatever you hand it. If the rendered output includes Date.now(), a crypto UUID, a Map or Set whose insertion order varies between runs, or a generated test-id from your component library, the snapshot captures today's value and tomorrow's run disagrees. The test has not changed. The world has.

test("renders invoice", () => {

// Invoice renders 'Generated: {Date.now()}' and a random receipt UUID

const tree = renderer.create(<Invoice />).toJSON();

expect(tree).toMatchSnapshot();

});

Fix. Freeze the sources of non-determinism with a custom serializer or a jest.setSystemTime() stub. For UUIDs, mock the generator. For anything truly variable, assert on structure with property matchers rather than a full snapshot.

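// Matches canonical 8-4-4-4-12 hex UUID strings

const UUID_RE = /^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$/i;

expect.addSnapshotSerializer({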

test: (v) => typeof v === "string" && UUID_RE.test(v),

print: () => '"<uuid>"',

});

beforeAll(() => {

jest.useFakeTimers().setSystemTime(new Date("2026-01-01"));

});
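Where a serializer is awkward to wire up, the same idea can be applied to the value before it is snapshotted. The sketch below is one hedged way to do that in plain JavaScript: scrubVolatile, the two regular expressions, and the sample runA/runB objects are all hypothetical names, and it assumes timestamps appear as ISO-8601 strings rather than raw Date.now() numbers.

```javascript
// Hypothetical helper: replaces volatile values so two runs serialize identically.
const UUID_RE = /[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}/gi;
const ISO_DATE_RE = /\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}(?:\.\d+)?Z/g;

function scrubVolatile(value) {
  if (typeof value === "string") {
    return value.replace(UUID_RE, "<uuid>").replace(ISO_DATE_RE, "<timestamp>");
  }
  if (Array.isArray(value)) return value.map(scrubVolatile);
  if (value !== null && typeof value === "object") {
    // Recurse into plain objects, keeping keys untouched.
    return Object.fromEntries(
      Object.entries(value).map(([key, inner]) => [key, scrubVolatile(inner)])
    );
  }
  return value; // numbers, booleans, null, undefined pass through
}

// Two "runs" of the same render that differ only in volatile fields.
const runA = {
  receipt: "3f2b8c1e-9d4a-4e7b-8a6f-1c2d3e4f5a6b",
  generated: "Generated: 2026-01-01T09:00:00.000Z",
};
const runB = {
  receipt: "a1b2c3d4-e5f6-4a7b-8c9d-0e1f2a3b4c5d",
  generated: "Generated: 2026-01-02T17:30:12.345Z",
};

const sameAfterScrub =
  JSON.stringify(scrubVolatile(runA)) === JSON.stringify(scrubVolatile(runB));
```

In a Jest test the scrubbed value is what would be handed to the matcher, for example expect(scrubVolatile(tree)).toMatchSnapshot().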

With Mergify. Test Insights groups the repeated diffs under a single flaky-test confidence score so you see the pattern at once: same test, same file, three drifting snapshots in a week. Quarantine until you add the serializer.

Pattern 3

Module-mock hoisting traps

Symptom. A mock you thought you scoped to one file starts affecting a later file in the same worker, or your imported module is undefined on the second run.

Root cause. jest.mock('./db') is hoisted above imports at transform time. That works great when the factory is pure, but if the factory captures a closure variable or your mock caches state, the second test file that imports ./db gets the cached mock, not a fresh one. Jest workers reuse module caches across files by default.

// auth.test.ts

jest.mock("./db", () => ({ query: jest.fn().mockResolvedValue([]) }));

import { login } from "./auth";

test("login calls db.query", async () => { /* ... */ });

// billing.test.ts, same worker

import { charge } from "./billing";

test("charge hits the db", async () => {

await charge();

// expected: real db.query. actual: the mock from auth.test.ts

});

Fix. Reset the module registry with jest.resetModules(), or set resetModules: true in your config so every test starts from a fresh registry. Add resetMocks: true to reset mock implementations and call history as well. If a mock is truly global, put it in __mocks__ where the behavior is explicit.

// jest.config.js

module.exports = {

resetMocks: true,

resetModules: true,

};
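To make the effect of a registry reset concrete, here is a toy model of a module cache in plain JavaScript. It is not Jest's implementation; createRegistry, load, reset, and dbMockFactory are hypothetical names used only to show why a cached mock instance carries state forward until the cache is cleared.

```javascript
// Toy model of a module registry. This is NOT Jest's implementation,
// only an illustration of why a cached mock carries state forward.
function createRegistry() {
  const cache = new Map();
  return {
    // Run the factory on first request, then serve the cached instance.
    load(name, factory) {
      if (!cache.has(name)) cache.set(name, factory());
      return cache.get(name);
    },
    // Rough analogue of jest.resetModules(): forget every cached instance.
    reset() {
      cache.clear();
    },
  };
}

// Hypothetical mock factory whose instance records the calls it receives.
const dbMockFactory = () => {
  const calls = [];
  return {
    calls,
    query(sql) {
      calls.push(sql);
      return [];
    },
  };
};

const registry = createRegistry();

registry.load("./db", dbMockFactory).query("SELECT 1");
// Same cached instance, so the earlier call is still visible.
const leakedCalls = registry.load("./db", dbMockFactory).calls.length;

registry.reset();
// The factory runs again, so the new instance starts clean.
const freshCalls = registry.load("./db", dbMockFactory).calls.length;
```

The point of the sketch is only the contrast between leakedCalls and freshCalls: state attached to a cached instance survives until something clears the cache.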

With Mergify. Test Insights detects the cross-file signature: a test that only fails when run after a specific other file. The dashboard surfaces the ordering dependency so you know where to look.

Pattern 4

test.concurrent state sharing

Symptom. A suite written with test.concurrent fails intermittently with off-by-one counters, wrong user records, or assertions that see state they should not.

Root cause. test.concurrent runs tests in parallel inside a single file using Jest's promise scheduler. Any module-level variable they touch is shared. beforeEach reset hooks do not fire between concurrent tests. They fire before each of them, in order, which is not the same thing.

let counter = 0;

beforeEach(() => {

counter = 0;

});

test.concurrent("increments to 1", async () => {

counter++;

expect(counter).toBe(1); // passes in isolation

});

test.concurrent("increments to 1 again", async () => {

counter++;

expect(counter).toBe(1); // fails when the other test has already incremented

});

Fix. Only use test.concurrent for tests that are pure functions of their inputs. If a test reaches for a shared mock, a database, or a module singleton, demote it to a regular test.
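The difference between shared and test-local state can be reproduced outside Jest. The following is a minimal sketch in plain Node, with Promise.all standing in for the concurrent scheduler; sharedStateTest, isolatedTest, and runConcurrently are hypothetical names, and the single await is a stand-in for whatever I/O a real test would perform.

```javascript
let sharedCounter = 0;

// Touches module-level state, like the counter example above.
async function sharedStateTest() {
  sharedCounter++;
  await Promise.resolve(); // yield, as any awaited I/O would
  return sharedCounter; // a test would assert that this equals 1
}

// Keeps its state local, so interleaving cannot affect it.
async function isolatedTest() {
  let localCounter = 0;
  localCounter++;
  await Promise.resolve();
  return localCounter;
}

// Start both copies before either finishes, as a concurrent runner would.
function runConcurrently(testFn) {
  return Promise.all([testFn(), testFn()]);
}

const sharedRun = runConcurrently(sharedStateTest); // resolves to [2, 2]
const isolatedRun = runConcurrently(isolatedTest); // resolves to [1, 1]

sharedRun.then((values) => console.log("shared:", values));
isolatedRun.then((values) => console.log("isolated:", values));
```

Both copies of the shared-state function increment before either reads, so neither observes the value 1 it expects. The isolated version is unaffected by scheduling, which is the property to look for before marking a test concurrent.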

With Mergify. Test Insights surfaces concurrent-only failures distinctly: the test fails only when its file runs with --maxConcurrency > 1, and passes in sequential reruns. That signature is the fingerprint of shared-state parallelism, and the dashboard labels it.

Pattern 5

Symptom. A test completes green, then a later test fails with an error message that mentions something the earlier test was doing.

Root cause. A test that calls .then() without returning or awaiting the promise finishes before the promise resolves. The resolution lands in the next test's microtask queue, where it can mutate shared state, call a mock that now belongs to a different test, or trigger an unhandledRejection that Jest attributes to the wrong test.

test("fetches the user", () => {

fetchUser().then((u) => {

// this resolves after the test body has already returned

expect(u.name).toBe("Rémy");

});

});

Fix. Always await or return promises from test bodies. Turn on the @typescript-eslint/no-floating-promises lint rule so unawaited promises are caught before review.

test("fetches the user", async () => {

const u = await fetchUser();

expect(u.name).toBe("Rémy");

});

With Mergify. Test Insights reruns the failing test on a worker by itself. If the test passes alone but fails under the original schedule, the dashboard tags it with a 'sequencing-dependent' label and suggests the floating-promise pattern.

Pattern 6

Symptom. Your pipeline is green. Your users hit a bug in production that your tests were supposed to catch.

Root cause. jest.retryTimes(n) re-runs a failing test up to n times and reports the last result. A real bug that fails on the first attempt and passes on the second (because of caching, warm-up, or a race it happens to win on the retry) gets reported as green. Retries at the Jest level are a tempting knob, but they paper over exactly the kind of failure CI is supposed to catch.

// jest.config.js (please don't)

module.exports = {

setupFilesAfterEnv: ["<rootDir>/jest.setup.js"],

};

// jest.setup.js

jest.retryTimes(3, { logErrorsBeforeRetry: false });

Fix. Do not retry at the Jest level. When a test is genuinely flaky, diagnose and fix it. When diagnosis takes more than a session, quarantine it instead. That keeps the signal visible without blocking merges.

With Mergify. Test Insights reruns at the CI level with attempt-level result tracking. You see that a test passed on attempt 2 of 3, which is exactly the information retryTimes() throws away. Quarantine kicks in once the pattern is clear.
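To illustrate what attempt-level tracking preserves, here is a generic sketch of a retry loop that records every attempt instead of only the last one. It is not Mergify's or Jest's implementation; runWithAttempts, warmUpSensitive, and the "flaky" status label are hypothetical.

```javascript
// Runs testFn up to maxAttempts times (assumed to be at least 1)
// and keeps the outcome of every attempt.
function runWithAttempts(testFn, maxAttempts) {
  const attempts = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      testFn(attempt);
      attempts.push({ attempt, passed: true });
      break; // stop at the first pass, as a retry loop would
    } catch (error) {
      attempts.push({ attempt, passed: false, message: error.message });
    }
  }
  const last = attempts[attempts.length - 1];
  // A pass that needed more than one attempt is reported as flaky, not green.
  const status = !last.passed ? "failed" : attempts.length === 1 ? "passed" : "flaky";
  return { status, attempts };
}

// Hypothetical test that fails cold and passes once warmed up.
const warmUpSensitive = (attempt) => {
  if (attempt === 1) throw new Error("cache miss");
};

const report = runWithAttempts(warmUpSensitive, 3);
console.log(report.status, report.attempts);
```

A last-result-only view of the same run would show a pass. Keeping the attempts array is what lets the first-attempt failure and its message stay visible.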

Pattern 7

Symptom. A test passes in your file but throws ReferenceError: document is not defined in another file that tests the same component.

Root cause. Jest lets you choose between the node and jsdom environments per file with a @jest-environment pragma. Forget the pragma on a file that needs DOM globals and the test throws on load. Keep the pragma on a file that no longer needs DOM and you pay the jsdom startup cost on every run for no reason.

// ComponentA.test.tsx: has the pragma, works

/**

* @jest-environment jsdom

*/

import { render } from "@testing-library/react";

// ComponentB.test.tsx: forgot the pragma

import { render } from "@testing-library/react";

test("renders", () => {

render(<B />); // ReferenceError: document is not defined

});

Fix. Set testEnvironment: "jsdom" for your UI tests through a projects entry in jest.config.js, and leave node as the default for everything else. Since Jest 28, jsdom is no longer bundled, so install jest-environment-jsdom alongside it. Per-file pragmas should be the exception, not the rule.

// jest.config.js

module.exports = {

projects: [

{ displayName: "unit", testEnvironment: "node", testMatch: ["<rootDir>/src/**/*.test.ts"] },

{ displayName: "ui", testEnvironment: "jsdom", testMatch: ["<rootDir>/src/**/*.test.tsx"] },

],

};

With Mergify. Environment misconfigurations manifest as consistent failures when the file runs alone, not as intermittent flakes. Test Insights still catches them through confidence scoring on the default branch, surfacing the file as broken rather than flaky.

Pattern 8

Symptom. The first test of a new CI run fails with a 'table does not exist' or 'fixture not found' error. A rerun of the same SHA passes.

Root cause. globalSetup runs once before the suite. If its function returns before the async work it kicked off is done, Jest starts your tests against a half-seeded database or a half-built temp directory. The first few tests fail; by the time the setup actually completes, later tests see a consistent world.

// setup.ts: the bug

export default function () {

seedDatabase(); // returns a promise, never awaited

}

Fix. Make the setup function async and await every side effect. Jest blocks until the returned promise resolves.

export default async function () {

await seedDatabase();

}
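The race can be reproduced without Jest or a database. The sketch below uses plain Node with an in-memory stand-in; createDb, seed, runSuite, brokenSetup, and fixedSetup are hypothetical names, and the 20 ms timer is an arbitrary stand-in for real seeding work.

```javascript
// In-memory stand-in for a database whose seeding takes a while.
function createDb() {
  const tables = new Set();
  return {
    tables,
    seed() {
      return new Promise((resolve) => {
        setTimeout(() => {
          tables.add("users");
          resolve();
        }, 20);
      });
    },
  };
}

// Mimics a runner: wait on whatever setup returns, then run the first test.
async function runSuite(setup) {
  const db = createDb();
  await setup(db);
  return db.tables.has("users"); // what the first test observes
}

// Returns undefined immediately, so the runner has nothing to wait on.
const brokenSetup = (db) => {
  db.seed();
};

// Returns a promise that settles only after seeding is done.
const fixedSetup = async (db) => {
  await db.seed();
};

const brokenResult = runSuite(brokenSetup); // resolves to false
const fixedResult = runSuite(fixedSetup); // resolves to true

brokenResult.then((seen) => console.log("broken setup, table visible:", seen));
fixedResult.then((seen) => console.log("fixed setup, table visible:", seen));
```

The only difference between the two setups is whether the promise reaches the caller, which is why the fix is a matter of returning or awaiting every side effect.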

With Mergify. Test Insights notices that the first N tests of a run fail consistently only on cold CI workers, which is the fingerprint of a setup race. The dashboard flags the ordering and the subset of affected tests.